Study of Multivitamin Use and Memory

Routine multivitamins are likely not the best strategy for cognitive benefits.

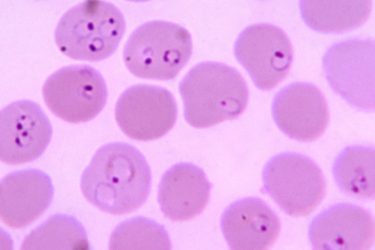

A New Malaria Vaccine

A second anti-malarial vaccine in just two years could be a game-changer for this deadly disease.

But Is It Real?

Why we need more science in medicine.

Healthcare Costs of Air Pollution

More recent estimates of the health-related costs of air pollution are staggering.

Brain Stimulation for Memory

Brain stimulation for memory is interesting science, but don't believe the premature claims and hype.

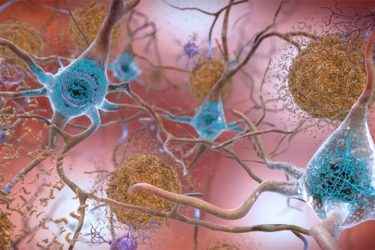

Fraud, Scientific Rigor, and Alzheimer’s Research

A stunning case of possible fraud in Alzheimer's research reinforces the need for scientific rigor at every level.

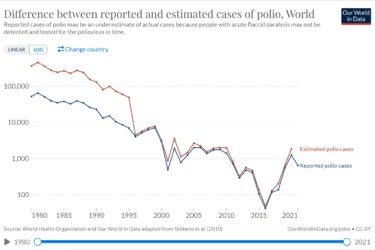

The Return of Polio

Once again we lose the chance to eradicate polio, but the goal remains relatively close.

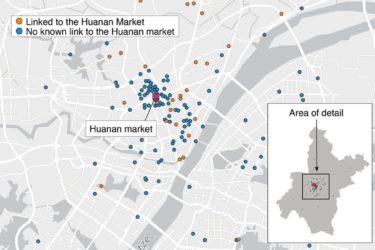

New Studies on the Origin of COVID-19

New evidence strongly supports the conclusion that SARS-CoV-2 emerged from the wet markets of Wuhan, killing the lab-leak hypothesis.

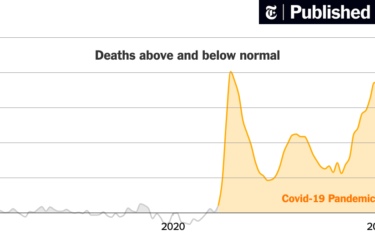

Excess in Non-COVID Deaths

What are the true causes of the excess non-COVID deaths during the pandemic?

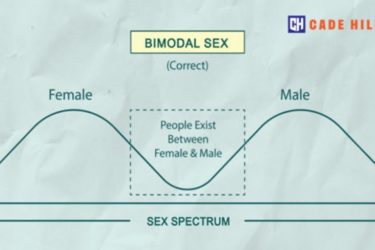

The Science of Biological Sex

What does the science actually say about biological sex?