Category: Clinical Trials

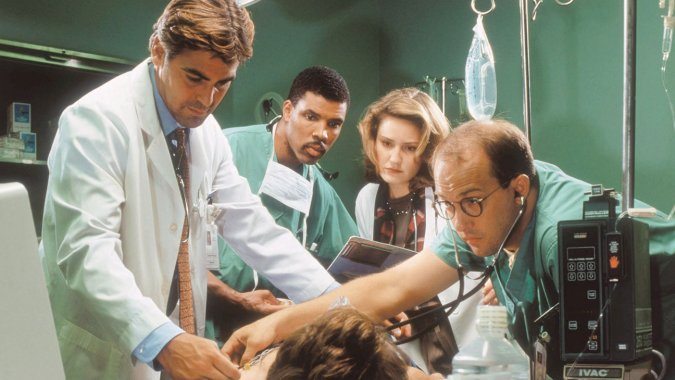

On the pointlessness of acupuncture in the emergency room…or anywhere else

As incredible as it seems, advocates of "integrative medicine" are on the verge of creating a new specialty, emergency acupuncture. I wish I were joking, but I'm not.

Acupuncture and Endorphins: Not all that Impressive

I was reading, and deconstructing, a particularly awful bit of advice about acupuncture from Consumer Reports. It was the same old same old, but the source made it particularly awful. I expect more from Consumer Reports than an uncritical regurgitation of the standard mythical acupuncture narrative. The report included the quote "One possible reason for the benefits of acupuncture:..."

Forget stem cell tourism: Stem cell clinics in the US are plentiful

It's generally thought that quack stem cell clinics are primarily a problem overseas because the FDA would never allow them on US soil. As a new survey shows, that assumption couldn't be more wrong.

A systematic review about nothing

There is dubious content in PubMed that you won’t find unless you look for it or stumble across it inadvertently. It’s the entire field of alternative medicine, which is abstracted and compiled along with the actual medical literature. In this world, the impossible is accepted as fact, and journal articles focus on the medical equivalent of counting angels on pinheads. I’ve been...

What’s the harm? Stem cell tourism edition

Stem cells have become big business. Offshore clinics claim to use stem cells to treat everything from aging, diabetes, stroke, and cancer to even autism, all without compelling evidence that these treatments have any meaningful effect. Unfortunately, the potential for harm, both financial and medical, is high, as the case of Jim Gass demonstrates.

Whither the randomized controlled clinical trial?

With the rise of precision medicine and genomics, the conventional randomized clinical trial appears more and more outdated. Fortunately, clinical trials are evolving, but will it be enough to incorporate the numerous advances in "-omic" medicine in a rigorous scientific manner to benefit patients?

False balance about Stanislaw Burzynski and his disproven cancer therapy, courtesy of STAT News

One common theme that has been revisited time and time again on this blog since its very founding is the problem of how science and medicine are reported. For example, back when I first started blogging, years before I joined Science-Based Medicine in 2008, one thing that used to drive me absolutely nuts was the tendency of the press to include in...

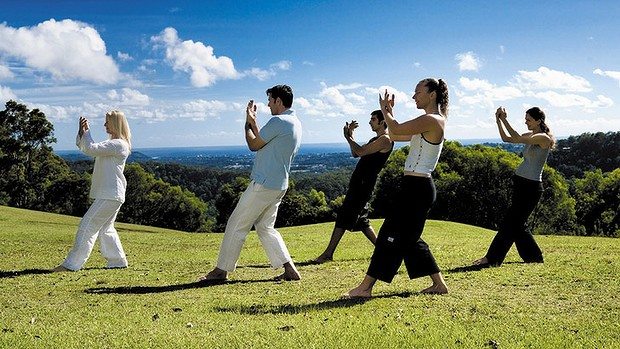

Tai Chi versus physical therapy for osteoarthritis of the knee: How CAM “rebranding” works

“Complementary and alternative medicine” (CAM), now more frequently referred to as “integrative medicine” by its proponents, consists of a hodge-podge of largely unrelated treatments that range from seemingly reasonable (e.g., diet and exercise) to pure quackery (e.g., acupuncture, reiki and other “energy medicine”) that CAM proponents are trying furiously to “integrate” as coequals into science-based medicine. They do this because they have...

Chiropractors, Blind Pigs, and Acorns

When people are at the end of their life they like to pass on their life lessons. One thing I have never had a patient say is “Doc, I sure wish I had spent more time at work.” I try and keep that in mind, but then there are those work commitments that are hard to avoid. I need to have a...

Acupuncture does not work for menopause: A tale of two acupuncture studies

Arguably, one of the most popular forms of so-called “complementary and alternative medicine” (CAM) being “integrated” with real medicine by those who label their specialty “integrative medicine” is acupuncture. It’s particularly popular in academic medical centers as a subject of what I like to refer to as “quackademic medicine”; that is, the study of pseudoscience and quackery as though it were real...