Your health insurance plan probably covers anti-inflammatory drugs. But does it cover acupuncture treatments? Should it? Which health services deliver good value for money? Lest you think the debate is limited to the United States (which is an outlier when it comes to health spending), even countries with publicly run healthcare systems are scrutinizing spending. Devoting dollars to one area (say, hospitals) is effectively a decision not to spend on something else (perhaps public health programs). All systems, be they public or private, try to allocate funds as efficiently as possible. Thoughtful decisions require a consideration of both benefits and costs.

One of the consistent positions put forward by contributors to this blog is that all health interventions should be evaluated based on the same evidence standard. From this perspective, there is no distinct basket of products and services which are labelled “alternative”, “complementary” or more recently “integrative”. There are only treatments and interventions which have been evaluated to be effective, and those that have not. The idea that these two categories should both be considered valid approaches is a testament to promoters of complementary and alternative medicine (CAM), who, unable to meet the scientific standard, have argued (largely successfully) for different standards and special consideration — be it product regulation (e.g., supplements) or practitioner regulation.

Yet promoters of CAM seek the imprimatur of legitimacy conferred by the tools of science. And in an environment of economic restraint in health spending, they further recognize that showing the economic value of CAM is important. Consequently they use the tools of economics to argue a perspective, rather than answer a question. And that’s the case with a recent paper I noticed was being touted by alternative medicine practitioners. Entitled “Are complementary therapies and integrative care cost-effective? A systematic review of economic evaluations”, it attempts to summarize economic evaluations conducted on CAM treatments.

Why a systematic review? One of the more effective tools for evaluating health outcomes, a systematic review seeks to analyze all published (and unpublished) information on a focused question, using a standardized, transparent approach to evidence analysis. When done well, systematic reviews can sift through thousands of clinical trials to answer focused questions in ways that are less biased than cherry-picking individual studies. The Cochrane Collaboration’s systematic reviews form one of the more respected sources of objective information (with some caveats) on the efficacy of different health interventions. So there’s been interest in applying the techniques of systematic reviews to questions of economics, where both costs and effects must be measured.

Economic evaluations at their core seek to measure the “bang for the buck” of different health interventions. The most accurate economic analyses are built into prospective clinical trials. These studies collect real-world costs and patient consequences, and then allow an accurate evaluation of value-for-money. These types of analyses are rare, however. Most economic evaluations involve modelling (a little to a lot) where health effects and related costs are estimated, to arrive at a calculation of value. Then there’s a discussion of whether that value calculation is “cost-effective”. It’s little wonder that many health professionals look suspiciously at economic analyses: the models are complicated and involve so many variables with subjective inputs that it can be difficult to sort out what the real effects are. Not surprisingly, most economic analyses suggest treatments are cost-effective. Before diving into the study, let’s consider the approach:

What do you mean, cost-effective?

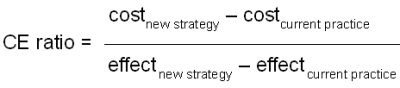

Whenever you hear any treatment described as “cost-effective”, you should immediately ask, “Cost-effective compared to what?” Cost-effectiveness evaluations consider incremental costs and incremental benefits, and cannot be considered in isolation:

The “CE ratio” is the additional cost paid for each additional unit of benefit offered by a new strategy or treatment: the difference in cost between the new strategy and current practice, divided by the difference in benefit. If the new strategy provides better outcomes at the same or lower cost than current practice, then the new strategy is said to be “dominant”; that is, it should be accepted immediately. If the new strategy provides worse outcomes at a higher cost, then current practice is said to dominate, and the new strategy should be abandoned. Few studies are this simple, however. Most published economic analyses describe interventions that claim better efficacy at a higher cost. (For an example, see the economic evaluation of virtually every new drug launched in the past 30 years.) If the CE ratio is low, the treatment is said to be “cost-effective”: there’s a lot of benefit for a modest additional cost. If the incremental cost is high relative to the incremental benefit, the CE ratio is high, and the new strategy is deemed “cost-ineffective”. By now it should be clear that what’s cost-effective is ultimately a judgement call. What’s felt to be cost-effective might be influenced by how much money you have to spend, or how you prioritize your spending.
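To make the logic concrete, here is a minimal Python sketch of the decision rule just described. The `Strategy` class, the `classify` function, the costs, the effects and both thresholds are invented for illustration; none of them come from the paper under discussion.

```python
from dataclasses import dataclass


@dataclass
class Strategy:
    name: str
    cost: float    # average cost per patient (hypothetical units)
    effect: float  # average benefit per patient, e.g. QALYs


def classify(new: Strategy, current: Strategy, threshold: float) -> str:
    """Label `new` relative to `current`.

    `threshold` is the most a payer will spend per unit of benefit.
    It is a judgement call, not a constant of nature.
    """
    d_cost = new.cost - current.cost
    d_effect = new.effect - current.effect

    if d_cost == 0 and d_effect == 0:
        return "no difference"
    if d_cost <= 0 and d_effect >= 0:
        # At least as good and no more expensive, and better on one axis.
        return "new strategy dominates"
    if d_cost >= 0 and d_effect <= 0:
        return "current practice dominates"
    if d_effect < 0:
        # Cheaper but worse: a trade-off that a single ratio hides.
        return "cheaper but less effective"

    ce_ratio = d_cost / d_effect  # extra cost per extra unit of benefit
    return "cost-effective" if ce_ratio <= threshold else "cost-ineffective"


usual_care = Strategy("usual care", cost=10_000, effect=1.0)
new_drug = Strategy("new drug", cost=12_000, effect=1.5)

# Same evidence, two different verdicts, depending only on the threshold.
print(classify(new_drug, usual_care, threshold=50_000))  # cost-effective
print(classify(new_drug, usual_care, threshold=3_000))   # cost-ineffective
```

The CE ratio here is 4,000 per unit of benefit in both calls. Only the willingness to pay changes, which is the judgement call in action.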

Given the number of variables in an economic analysis, the opportunity for bias is high. It all depends on the design. Some of the major considerations include:

What is the comparator? All cost-effectiveness evaluations compare one intervention to another. Substituting homeopathy for antibiotics for the treatment of colds may be a cost-effective intervention. After all, both are ineffective, and homeopathy may be less expensive than antibiotics. What’s more, there is the risk of harm from adverse events due to the drug, while homeopathy is inert, without medicinal ingredients. Does that mean homeopathy is “cost-effective” for colds? Not quite. Giving “no treatment” for a cold is superior to (or “dominates”) both antibiotics and homeopathy, as it is both safe and free of cost. If the comparator is inappropriate, any derived cost-effectiveness estimate is irrelevant.
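The cold example can be sketched in a few lines of Python. Every number below is made up purely to mirror the argument (no benefit from either treatment, a small harm from the antibiotic), and the `Option`, `dominates` and `undominated` names are my own.

```python
from typing import NamedTuple


class Option(NamedTuple):
    name: str
    cost: float        # cost per patient, hypothetical
    net_effect: float  # benefit minus harm, arbitrary hypothetical units


def dominates(a: Option, b: Option) -> bool:
    """True if `a` is no worse than `b` on both axes and better on at least one."""
    no_worse = a.cost <= b.cost and a.net_effect >= b.net_effect
    better = a.cost < b.cost or a.net_effect > b.net_effect
    return no_worse and better


def undominated(options: list[Option]) -> list[str]:
    """Names of the options that no other option in the list dominates."""
    return [
        o.name
        for o in options
        if not any(dominates(other, o) for other in options)
    ]


# Illustrative numbers only: neither treatment helps a cold, and the
# antibiotic carries a small risk of adverse events.
antibiotics = Option("antibiotics", cost=30.0, net_effect=-0.01)
homeopathy = Option("homeopathy", cost=15.0, net_effect=0.0)
no_treatment = Option("no treatment", cost=0.0, net_effect=0.0)

print(undominated([antibiotics, homeopathy]))                # ['homeopathy']
print(undominated([antibiotics, homeopathy, no_treatment]))  # ['no treatment']
```

Leave the right comparator out of the list and homeopathy “wins”. Put it back in and homeopathy is dominated along with the antibiotic.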

Just how effective is the intervention? In general, effective interventions tend to be cost-effective. Some are even cost saving. Vaccines are one example. Interventions, science-based or otherwise, that offer only modest, incremental benefits may be deemed a cost-ineffective use of resources. Screening programs, for example, can be clinically effective, but tremendously cost-ineffective when you factor in the harms of false positives. Again, the best evidence for efficacy usually comes from prospective, randomized trials. Evidence can be derived from other settings, and there may be very good reasons why randomized data is unavailable (once again, vaccines are an example of this). However, this needs to be factored into the evaluation when considering overall effects, and the risks of bias. So when we’re looking at interventions, we need to consider not only the trial from which the economic evidence is derived, but the totality of evidence for that intervention. There are multiple studies that suggest that acupuncture has clinically meaningful effects. But the best and most comprehensive studies repeatedly conclude that acupuncture’s effects are placebo effects. If there is any doubt about the clinical effect of the intervention, then the economic analysis must reflect this. Otherwise, the value-for-money evaluation will be hopelessly biased. Recall the study of acupuncture for asthma that observed patient-reported improvements, but no objective improvements in control. Depending on what endpoints you base your economic model on, your conclusion will be very different.

How realistic is this intervention? Like any other clinical trial, data from research must be translated into the real world. If the interventions can be offered in the real world in a way that’s consistent with the trial, and the real-world patients are similar to those studied, we’d generally agree that a particular study is generalizable. As we move further away from the type of intervention studied and the types of patients that participated, the relevance of the clinical study to the real world becomes less clear. Patients in clinical trials behave differently than those receiving regular, everyday treatment. When we move from highly controlled trials to more pragmatic studies, we generally expect treatment effects to decline. This isn’t the case with CAM: rigorous studies show a lack of effect, while more “pragmatic” trials, studying real world use, are more likely to report benefit. While the treatment setting may be more realistic, it may be harder to distinguish objective effects from subjective ones.

What is the type of analysis? Different models answer different questions. Cost-effectiveness analyses compare the costs and effects of different treatments, when you can measure the effect objectively in natural units (for example, fractures prevented). Cost-benefit analyses express both costs and benefits in monetary terms, and are used to answer questions about overall benefits in a population, such as public-health interventions. Cost-utility analyses measure effects in “quality-adjusted life years” or QALYs, which weight each year of life by the quality of life during that year.
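For readers who haven’t met QALYs before, the arithmetic is simple. This is a rough Python sketch with invented inputs; the function names, costs and utility weights are assumptions for illustration, not figures from any study.

```python
def qalys(periods):
    """Sum of (years lived) x (utility weight) over each health state.

    `periods` is a list of (years, utility) pairs, where utility typically
    runs from 0.0 (death) to 1.0 (full health).
    """
    return sum(years * utility for years, utility in periods)


def cost_per_qaly_gained(cost_new, qalys_new, cost_old, qalys_old):
    """Incremental cost divided by incremental QALYs."""
    gained = qalys_new - qalys_old
    if gained <= 0:
        raise ValueError("no QALYs gained, so a cost per QALY gained is not meaningful")
    return (cost_new - cost_old) / gained


# Hypothetical patient followed for five years in each arm.
with_treatment = qalys([(5, 0.75)])  # 3.75 QALYs
without_treatment = qalys([(5, 0.5)])  # 2.5 QALYs

print(cost_per_qaly_gained(30_000, with_treatment, 5_000, without_treatment))  # 20000.0
```

Note where the subjectivity enters: the utility weights usually come from patient questionnaires, so an intervention that changes how patients report feeling can move the QALY estimate without changing any objective measure.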

These are the big four, but there are several other areas that need to be scrutinized in an evaluation, including the accuracy of the measurement tools used, the assignment of accurate costs to different outcomes, and the overall sensitivity of the model to small changes in estimates. The key point to remember is that with so many knobs and buttons, any economic analysis can make a health treatment look good — especially if the clinical evidence it’s based on is favorable.
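A one-way sensitivity analysis shows how much a single knob matters. The sketch below holds the extra cost fixed and varies only the assumed benefit; all figures and function names are hypothetical, and it assumes the add-on costs more than usual care.

```python
import math


def ce_ratio(extra_cost, extra_effect):
    """Extra cost per extra unit of benefit; infinite if there is no benefit.

    Assumes extra_cost is positive (the add-on costs more than usual care).
    """
    if extra_effect <= 0:
        return math.inf
    return extra_cost / extra_effect


def one_way_sensitivity(extra_cost, candidate_effects):
    """Recompute the CE ratio for each assumed effect size, holding cost fixed."""
    return {effect: ce_ratio(extra_cost, effect) for effect in candidate_effects}


# Hypothetical: the add-on costs 1,000 more per patient. The assumed gain
# (in QALYs) ranges from an optimistic unblinded estimate down to zero,
# which is what a placebo-only effect on objective endpoints would imply.
results = one_way_sensitivity(1_000, [0.10, 0.05, 0.02, 0.0])
for effect, ratio in results.items():
    print(f"assumed gain {effect:.2f} -> {ratio:,.0f} per unit of benefit")
```

Shrinking the assumed benefit from 0.10 to 0.02 multiplies the ratio by five, and at zero benefit no price is low enough. Whichever effect estimate the modeller chooses largely decides the answer.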

Given the variables that need to be examined, economic analyses don’t lend themselves to systematic reviews, which are designed to synthesize all relevant information to answer a focused clinical question. And that seems to be the case with the paper in question. These authors attempt to use the framework of a systematic review to examine the economic evidence supporting CAM treatments. The lead author is Patricia Herman, a behavioral scientist at the RAND Corporation who is also a naturopath. The second author is Beth L. Poindexter, also a naturopath, with no other publications cited in PubMed. Claudia Witt is a physician who holds multiple academic positions in complementary and alternative medicine. She has numerous publications in her CV, including studies of homeopathy for chronic illness. The final author is Dr. David Eisenberg, a medical doctor who is a proponent of CAM and naturopathy in particular. None of this disqualifies the authors or their economic analysis of CAM. However, it does raise concerns about the potential for bias in the analysis.

The review attempts to identify all published economic evaluations of CAM (which they call CIM, for complementary and integrative medicine). The authors point out that CAM is (not surprisingly) often not funded by insurance plans:

In not being part of conventional medicine, individual complementary therapies and emerging models of integrative medicine (ie, coordinated access to both conventional and complementary care)—collectively termed as complementary and integrative medicine (CIM)—are often excluded in financial mechanisms commonly available for conventional medicine,2 and are rarely included in the range of options considered in the formation of healthcare policy. The availability of economic data could improve the consideration and appropriate inclusion of CIM in strategies to lower overall healthcare costs. In addition, economic outcomes are relevant to the licensure and scope of practice of practitioners, industry investment decisions (eg, the business case for integrative medicine), consumers and future research efforts (ie, through identifying decision-critical parameters for additional research10).

This is a textbook example of begging the question: it assumes that CAM is being excluded from strategies to lower healthcare costs, without explaining why these treatments are not considered “conventional medicine,” and without demonstrating that these treatments actually lower overall healthcare costs. So the authors’ stated objective is to catalog the economic evidence that does exist.

The authors admit (and I agree) that search strategies for CAM in the published medical literature are difficult. It’s complicated in part by the industry’s decision to keep redefining itself, so what was labelled “alternative” is now “integrated” or “collaborative”. They exclude all treatments considered to be “standard treatment” or “delivered by conventionally credentialed medical personnel”. That is, they attempt to identify treatments which are either not proven to work, or proven not to work. The net result is 204 papers published from 2001 through 2010. The studies span the CAM spectrum:

- 45 were manipulative therapies (e.g., chiropractic) for a range of conditions

- 41 were acupuncture treatments

- 38 were studies of “natural” products

- 27 were for forms of “mind-body” medicine

- 24 were for homeopathy

- 18 were for general CAM interventions

- 25 were for other CAM therapies, including “aromatherapy, healing touch, Tai Chi, Alexander technique, spa therapy, music therapy, electrodermal screening, clinical holistic medicine, naturopathic medicine, anthroposophic medicine, water-only fasting, Ornish Program for Reversing Heart Disease, use of a corset and use of a traditional mental health practitioner.”

Unfortunately, in no part of the analysis is the veracity of the underlying clinical data questioned. The authors applied different economic analysis quality checklists, narrowing the list to 31 studies deemed “high quality”. And here’s where the weakness of the underlying evidence is made clear. There appear to be few, if any, papers based on studies that actually blinded patients. Many study the “adjunctive” or add-on use of CAM, such as acupuncture, alongside usual care, and conclude that the interventions are either cost-saving or offer good value for money. Some of the included studies are economic models only: they are not based on actual data collected in trials, but use simulations to estimate costs and effects. And some of the studies deemed CAM don’t appear to be CAM at all. One paper estimates the cost-effectiveness of a clinical trial that would evaluate vitamin K1 versus alendronate for fracture prevention, which is neither an evaluation of CAM nor an evaluation of its underlying cost-effectiveness. Another models the cost savings of Tai Chi programs in a nursing home population, based on the premise that Tai Chi demonstrably reduces the risk of falls. Looking at the manipulative treatments, some were delivered by chiropractors, and others by physiotherapists or general practitioners. Given the variety of treatments and approaches included in the final list, it’s not possible to draw any conclusions about the individual or overall effectiveness of CAM. But that doesn’t stop the authors from trying:

There are several implications of this study for policy makers, clinicians and future researchers. First, there is a large and growing literature of quality economic evaluations in CIM. However, although indexing is improving in databases, finding these studies can require going beyond simple CIM-related search terms. Second, the results of the higher-quality studies indicate a number of highly cost-effective, and even cost saving, CIM therapies. Almost 30% of the 56 cost-effectiveness, cost-utility and cost-benefit comparisons shown in table 4 (18% of the CUA comparisons) were cost saving. Compare this to 9% of 1433 CUA comparisons found to be cost saving in a large review of economic evaluations across all medicine.

Here the authors make a direct comparison between CAM and conventional medicine, implying that CAM may save more than medicine. That comparison can’t be substantiated, given the multiple weaknesses in the results they report. Contrast their finding with that of White and Ernst, who in their 2000 systematic review of CAM economic analyses reached a more cautious and pragmatic conclusion about the economic attractiveness of CAM:

Spinal manipulative therapy for back pain may offer cost savings to society, but it does not save money for the purchaser. There is a paucity of rigorous studies that could provide conclusive evidence of differences in costs and outcomes between other complementary therapies and orthodox medicine. The evidence from methodologically flawed studies is contradicted by more rigorous studies, and there is a need for high quality investigations of the costs and benefits of complementary medicine.

White and Ernst’s conclusion is equally relevant to the current systematic review. When it comes to evaluating cost-effectiveness, we first need to establish that a treatment is actually effective. Without this, there’s no point in proceeding to look at costs. The authors mention this indirectly, not to determine whether these interventions actually work, but to validate the results (and seemingly the conclusions) that have already been drawn about their effectiveness:

Given the substantial number of economic evaluations of CIM found in this comprehensive review, even though it can always be said that more studies are needed, what is actually needed are better-quality studies—both in terms of better study quality (to increase the validity of the results for its targeted population and setting) and better transferability (to increase the usefulness of these results to other decision makers in other settings).

Conclusion: CAM is not cost-effective

The highest quality evidence, and most rigorous clinical trials, have failed to identify complementary and alternative medicine interventions that offer meaningful clinical benefits. Lower quality trials are more likely to report positive findings, and economic analyses based on these studies should be expected to be biased towards findings of cost-effectiveness. Economic analyses that conclude treatments are cost effective are more likely to be published. The result is an economic house of cards. Given the variety of treatments, models and endpoints, systematic reviews are not useful tools to evaluate the overall cost-effectiveness of a disparate group of treatments. However, they may be useful in summarizing the types of studies that exist. Given the results of this systematic review, there is good evidence to suggest that economic analyses of CAM interventions do not generate useful or accurate estimates of cost-effectiveness.

Reference

Herman, P.M., Poindexter, B.L., Witt, C.M. & Eisenberg, D.M. (2012). Are complementary therapies and integrative care cost-effective? A systematic review of economic evaluations, BMJ Open, 2 (5) e001046. DOI: 10.1136/bmjopen-2012-001046