Sometimes the best-laid blogging plans of mice and men go awry, and this isn’t always a bad thing. As the day on which so many Americans indulge in mass consumption of tryptophan-laden meat in order to give thanks approached, I had tentatively planned on doing an update on Stanislaw Burzynski, given that he appears to have slithered away from justice yet again. Then what to my wondering eyes should appear in my e-mail inbox but news of a study that practically grabbed me by the collar, shook me, and demanded that I blog about it. As if to emphasize the point, e-mails suddenly started arriving from people who had seen stories about the study and, for reasons that I still can’t figure out, were interested in my take on it. Yes, I realize that I’m a breast cancer surgeon and therefore considered an expert on the topic of the study, mammography. I also realize that I’ve written about it a few times before. Even so, it never ceases to amaze me, even after all these years, that anyone gives a rodential posterior about what I think. Then I started getting a couple of e-mails from people at work, and I knew that Burzynski would have to wait or be relegated to my not-so-secret other blog (I haven’t decided yet).

As is my usual habit, I’ll set the study up by citing how it’s being spun in the press. My local hometown paper seems as good a place to begin as any, even though the story was reprinted from USA Today. The title of its coverage was Many women receiving unnecessary breast cancer treatment, study shows, and the article was released the day before the study came out in the New England Journal of Medicine:

Up to 70,000 American women a year are treated unnecessarily for breast cancer because they were screened with mammograms, according to an analysis in today’s “New England Journal of Medicine” that’s likely to reignite a running debate over the value of cancer screening.

The study, whose results are already being challenged by other cancer experts, finds that nearly one in three breast cancer patients – or 1.3 million women over the past three decades – have been treated for tumors that, although detectable with mammograms, would never have actually threatened their lives.

The study lays bare perhaps the greatest risk of cancer screening, called “overdiagnosis,” long acknowledged by doctors and even advocates of mammograms, but unknown to most women who undergo the procedures.

That’s actually a fairly sober assessment, as was this one by the LA Times:

About a third of all tumors discovered in routine mammography screenings are unlikely to result in illness, according to a new study that says 30 years of the breast cancer exams have resulted in the overdiagnosis of 1.3 million American women.

The report, published Thursday in the New England Journal of Medicine, argues that the increase in breast cancer survival rates over the last few decades is due mostly to improved therapies and not screenings, which are intended to flag tumors when they are small and most susceptible to treatment. Instead, the widespread use of mammograms now results in the overdiagnosis of breast cancer in roughly 70,000 patients each year, needlessly exposing those women to the cost and trauma of treatment, the authors wrote.

My first thought was: Here we go again. My second thought was: Wow. The result that one in three mammographically detected breast cancers might be overdiagnosed is eerily consistent with a study published three years ago that looked at mammography screening programs from locations as varied as the United Kingdom, Canada, Australia, Sweden, and Norway, which I discussed at the time it was released. The consistency could mean either convergence on a “true” estimate of overdiagnosis, or it might mean that both studies shared a bias, incorrect assumption, or methodological flaw. If they do, I couldn’t find it, but it’s still an intriguing similarity. In any case, let’s dig in. The article is by Archie Bleyer and H. Gilbert Welch (the latter of whom has featured prominently in this blog several times before) and is entitled Effect of Three Decades of Screening Mammography on Breast-Cancer Incidence.

Overdiagnosis and overtreatment in diseases we screen for

Before I go on, I feel obligated to point out, as I always do when this subject comes up, that what I am referring to here are breast cancers detected by screening mammography in asymptomatic women. I cannot emphasize this enough. This study (and most of the studies I blogged about above) does not apply to women who have detected a lump, suspicious skin change, or any other symptoms of breast cancer. For such women, as far as we’ve been able to ascertain, the likelihood of overdiagnosis is vanishingly small. These cancers will almost certainly progress. Therefore, this study and my post do not apply to these cancers. If you feel a lump in your breast, get it checked out. If it is cancer, it will not fail to progress, nor will it go away. Again, I cannot emphasize this enough.

Regular readers are, I hope, familiar with the concept of overdiagnosis. After all, both Harriet Hall and I have written a fair amount about it before, so I hope those who’ve read our discussions will bear with me a moment as I review it for those who might not be familiar with it. In doing so, I’ll steal shamelessly from my previous writings, after noting that it was four and a half years ago that I first pointed out that the relationship between early detection of cancer (any cancer) and improved survival is more complicated than most people, even most doctors (including oncologists), think.

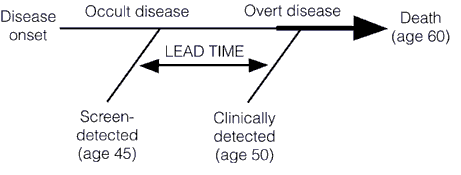

Screening for a disease involves subjecting an asymptomatic population, the vast majority of whom don’t have the disease, to a diagnostic test in order to find the disease before it causes symptoms. This is very different from most diagnostic tests used in medicine, the vast majority of which are ordered for specific indications. Any test contemplated for screening thus has to meet a very stringent set of requirements. First, it must be safe. Remember, we’re subjecting asymptomatic patients to a test, and invasive tests are rarely going to be worthwhile, except under uncommon circumstances (colonoscopy screening for colon cancer comes to mind). Second, the disease being screened for must be curable or manageable. It makes little sense to screen for a disease like amyotrophic lateral sclerosis (Lou Gehrig’s disease), because there is very little that will slow its progression. The one drug that we do have, riluzole, only slows the progression of ALS slightly. Thus, diagnosing ALS a few months or a few years before symptoms appear won’t change the ultimate outcome. A very important corollary to this principle is that acting to treat or manage a disease early, before symptoms appear, should result in a better outcome, and the authors of this study point this out. I’ve discussed the phenomenon of lead time bias in depth before. It’s a phenomenon in which earlier diagnosis makes survival appear longer simply because the disease was caught earlier, which starts the survival clock sooner, when in reality treatment had no effect on it. The condition progresses at the same rate as it would without treatment, and the patient dies at the same time. Lead time bias is such an important concept that I’m going to republish the diagram I last used to explain it, because in this case a picture is worth a thousand words:

Then there’s this graph, from this paper, that demonstrates how screening preferentially detects less aggressive cancers (more details in my post here):

Another requirement for a screening test is that the disease being screened for must be relatively common. Screening for rare diseases is simply not practical from an economic standpoint. Economics aside, it’s bad medicine, too, because the vast majority of “positive” test results will be false positives. Another way of saying this is that the specificity of the test must be high enough that, whatever the prevalence of the disease in the population, it does not produce too many false positives. In other words, for less common diseases the specificity and positive predictive value must be very high (i.e., a “positive” test result must have a very high probability of being a true positive, in which the patient actually does have the disease being tested for); either that, or screeners must be prepared to do a lot of confirmatory testing for a large number of false positives. For more common diseases, a lower positive predictive value is tolerable. The test must also be sufficiently sensitive that it doesn’t produce too many false negatives. Remember, one potential drawback of a screening program is a false sense of security in patients who have been screened, a drawback that will be increased if a test misses too many patients with disease. Finally, a screening test must also be relatively inexpensive.
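To see why prevalence matters so much, here is a minimal Python sketch of the positive predictive value calculation using Bayes’ theorem. The sensitivity and specificity it uses (90% and 95%) are hypothetical round numbers chosen for illustration, not the performance of mammography or any other real test, and the function name is my own.

```python
def positive_predictive_value(prevalence: float, sensitivity: float,
                              specificity: float) -> float:
    """Probability that a positive screening result is a true positive,
    computed with Bayes' theorem."""
    true_positives = sensitivity * prevalence
    false_positives = (1.0 - specificity) * (1.0 - prevalence)
    return true_positives / (true_positives + false_positives)


if __name__ == "__main__":
    # Hypothetical test characteristics, held fixed while prevalence varies.
    for prevalence in (0.1, 0.01, 0.001):
        ppv = positive_predictive_value(prevalence, sensitivity=0.90, specificity=0.95)
        print(f"prevalence {prevalence:.3f} -> PPV {ppv:.3f}")
```

With these made-up numbers, the PPV falls from about 0.67 at a prevalence of 10% to about 0.15 at 1% and below 0.02 at 0.1%. The test hasn’t changed at all; only the population being screened has, which is the arithmetic behind the statement that screening for rare diseases produces mostly false positives.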

So what is overdiagnosis? Stated simply, overdiagnosis is a term used to describe disease detected in asymptomatic individuals that would never go on to threaten the life or health of those individuals. Many people subscribe to a simplistic view of cancer in which, after the initial transforming event occurs to create a cancer cell, the cancer will inevitably progress over time until, if left untreated, it kills the individual. In such a view, detecting cancer earlier is an unalloyed good because it is assumed that, if left untreated, such tiny cancers will inevitably progress. However, as I first started to point out a few years ago, this is not necessarily true. People have a lot of cancer in them that never causes a problem. For instance, it’s well known that autopsy series of men who died at age 80 or older have consistently shown that the majority of them (60-80%) have detectable foci of prostate cancer. Yet prostate cancer obviously didn’t kill the men in these autopsy series; they lived long enough to die either of old age or of a cause other than prostate cancer. In other words, they died with early stage cancer but not of that cancer. Similarly, thyroid cancer is fairly uncommon (although not rare) among cancers, with a prevalence of around 0.1% for clinically apparent cancer in adults between ages 50 and 70. Finnish investigators performed an autopsy study in which they sliced the thyroids at 2.5 mm intervals and found at least one papillary thyroid cancer in 36% of Finnish adults. Doing some calculations, they estimated that, if they were to decrease the width of the “slices,” at a certain point they could “find” papillary cancer in nearly 100% of people between 50 and 70.
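Why finer slicing keeps “finding” more cancer follows from simple geometry. The sketch below is my own illustration, not the Finnish investigators’ actual calculation: it assumes a roughly spherical tumor whose position along the cutting axis is random, in which case a series of parallel cuts spaced s apart passes through a tumor of diameter d with probability min(1, d/s). The tumor sizes used are hypothetical.

```python
def p_tumor_sectioned(diameter_mm: float, slice_interval_mm: float) -> float:
    """Chance that at least one of a series of parallel cuts spaced
    slice_interval_mm apart passes through a spherical tumor of the given
    diameter, assuming the tumor's position along the cutting axis is random."""
    if slice_interval_mm <= 0:
        raise ValueError("slice interval must be positive")
    return min(1.0, diameter_mm / slice_interval_mm)


if __name__ == "__main__":
    # Hypothetical microscopic tumors 0.5 mm and 1.0 mm across.
    for interval in (5.0, 2.5, 1.0, 0.5):
        chances = [p_tumor_sectioned(d, interval) for d in (0.5, 1.0)]
        print(f"cut every {interval} mm -> " + ", ".join(f"{p:.2f}" for p in chances))
```

Under this assumption, halving the slice interval doubles the chance of catching any given tiny tumor, until every tumor at least as wide as the interval is certain to be cut. Nothing about the thyroids changes; only how hard we look.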

The list of conditions and diseases for which screening has increased apparent incidence goes on and on, extending far beyond cancer.

One might ask what the problem is with overdiagnosis; i.e., what’s the harm? The harm arises from the consequence of overdiagnosis, which is overtreatment. If the screen-detected condition, be it tumor or other medical condition, would never progress to harm the patient even if untreated, then “treating” that condition can only cause harm, not benefit. That’s not even counting the risks from additional follow-up studies that are frequently required when a screening test finds an abnormality. In the case of breast cancer, the subject of the current study, those additional tests might mean more images (and the extra radiation they involve), invasive needle biopsies, and even surgical biopsies. In the case of screening for lung cancer, biopsies are riskier than for breast cancer, given the large blood vessels in the lung and the ease with which a pneumothorax can occur. All mass screening programs involve a tradeoff, a balancing of risks and potential benefits, and it’s not always clear how great the benefits might be. For instance, lead time bias can lead to an overestimation of the benefits of treatment and a huge apparent increase in survival even if treatments don’t have an effect on the disease. That’s why mortality rates, not five-year survival rates, are the appropriate metric when evaluating a disease for which lead time bias is a consideration. It’s also why, when we do use survival rates in cancer, they have to be stage-adjusted in order to be comparable.
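Here is a toy Python sketch of why five-year survival misleads under lead time bias while mortality does not. Everything in it is hypothetical: five patients whose cancer would be diagnosed clinically at age 60 and who die of it at fixed ages no matter when it is found; in other words, treatment is assumed to have no effect at all.

```python
from dataclasses import dataclass


@dataclass
class Patient:
    age_clinical_dx: float  # age at which symptoms would have led to diagnosis
    age_at_death: float     # age at death from the cancer; treatment assumed useless


def five_year_survival(patients: list[Patient], lead_time_years: float) -> float:
    """Fraction alive 5 years after diagnosis when diagnosis is moved earlier
    by lead_time_years but each patient's age at death does not change."""
    survivors = 0
    for p in patients:
        age_at_dx = p.age_clinical_dx - lead_time_years
        if p.age_at_death - age_at_dx >= 5.0:
            survivors += 1
    return survivors / len(patients)


def deaths_by_age(patients: list[Patient], age: float) -> int:
    """Cancer deaths by a given age; the lead time never enters this count."""
    return sum(1 for p in patients if p.age_at_death <= age)


if __name__ == "__main__":
    cohort = [Patient(60.0, d) for d in (62.0, 63.0, 64.0, 66.0, 68.0)]
    for lead in (0.0, 3.0):
        print(f"lead time {lead} y: 5-year survival {five_year_survival(cohort, lead):.1f}, "
              f"deaths by 70: {deaths_by_age(cohort, 70.0)}")
```

With a three-year lead time, five-year survival in this made-up cohort jumps from 0.4 to 1.0, yet the number of deaths by age 70 stays at five. Nothing about the disease changed; only the starting point of the survival clock moved.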

On to the study

In order to understand Welch’s study, it is important to realize that there is a huge assumption underlying its methods. That assumption, although reasonable, is not unassailable (more on that later). Specifically, as Welch states in the very first paragraph:

There are two prerequisites for screening to reduce the rate of death from cancer.1,2 First, screening must advance the time of diagnosis of cancers that are destined to cause death. Second, early treatment of these cancers must confer some advantage over treatment at clinical presentation. Screening programs that meet the first prerequisite will have a predictable effect on the stage-specific incidence of cancer. As the time of diagnosis is advanced, more cancers will be detected at an early stage and the incidence of early-stage cancer will increase. If the time of diagnosis of cancers that will progress to a late stage is advanced, then fewer cancers will be present at a late stage and the incidence of late-stage cancer will decrease.3

In other words, a screening test that does not overdiagnose will find cancers earlier. These cancers will be successfully (or at least more successfully) treated, and thus pulled out of the pool of early stage cancers that would otherwise progress to late stage cancers. Removing these early stage cancers from the pool should shift the stage distribution of breast cancer toward earlier stages and result in an absolute decrease in the number of late stage cancers being diagnosed. In other words, as the number of cancers being detected early goes up, the number of cancers being detected at advanced stages, particularly at stage IV (which can be treated but not cured), should go down. Thus, the overall hypothesis is that, if mammography is effective, there should be an increase in the incidence of early stage cancer diagnosed and a corresponding decrease in the incidence of late stage cancer. If the diagnosis of early stage cancer increased by more than the diagnosis of late stage cancer decreased, the difference likely represents overdiagnosis.

To test this hypothesis and try to estimate the amount of overdiagnosis going on, Welch used the Surveillance, Epidemiology, and End Results (SEER) database to examine stage-specific incidence of breast cancer over time. This database covers approximately 10% of the U.S. population. Using such a database was complicated by two issues. First, the authors had to choose a baseline; more specifically, a time period whose breast cancer incidence they could treat as the baseline incidence for cancers detected without mammography. For various reasons, including underestimation in the earliest years of the SEER database in the early 1970s and a brief spike in breast cancer diagnoses in the wake of Betty Ford’s diagnosis, the authors chose 1976 to 1978 as this baseline. Second, there was a period from the late 1980s to early 2000s when hormone replacement therapy with mixed estrogen-progesterone combinations was popular and is widely believed to have increased the incidence of breast cancer. This time period, according to the authors, ended in 2006. To correct for this in current estimates of breast cancer incidence from 2006 to 2008, they truncated the observed incidence from 1990 to 2005 to remove “excess” cases from previous years. This part of the approach actually puzzled me a bit, because although it would result in a lower apparent incidence of breast cancer, it’s not clear how it would do what the authors stated and “provide estimates that were clearly biased in favor of screening mammography — ones that would minimize the surplus diagnoses of early-stage cancer and maximize the deficit of diagnoses of late-stage cancer”; i.e., provide a “best case scenario” for screening mammography.

Another area where I quibble a bit with Welch is his assumption that the change in the incidence rate of breast cancer among women under 40 (who do not undergo screening in this country) is a valid estimate of the true incidence of breast cancer. Breast cancer in women under the age of 40 is arguably biologically different than the more “typical” breast cancer. At the very least, it tends to be more aggressive. I realize that in the SEER database there is no better surrogate for the incidence of breast cancer in an unscreened population, but the question of the biological relevance of this “control” group was pretty much glossed over in this paper.

The first “money” figure is Figure 1, which shows the incidence of early and late stage cancer over time:

There are two things to note here. First, as I’ve discussed before on multiple occasions, the apparent incidence of early stage breast cancer skyrocketed after the introduction of mass mammography screening programs in the late 1970s and early 1980s and has only leveled off in the last six or seven years. Second, the incidence of cancers diagnosed at late stage did decrease, but not by a lot. The authors state:

The large increase in cases of early-stage cancer (from 112 to 234 cancers per 100,000 women — an absolute increase of 122 cancers per 100,000) reflects both detection of more cases of localized disease and the advent of the detection of DCIS (which was virtually not detected before mammography was available). The smaller decrease in cases of late-stage cancer (from 102 to 94 cases per 100,000 women — an absolute decrease of 8 cases per 100,000 women) largely reflects detection of fewer cases of regional disease. If a constant underlying disease burden is assumed, only 8 of the 122 additional early diagnoses were destined to progress to advanced disease, implying a detection of 114 excess cases per 100,000 women. Table 1 also shows the estimated number of women affected by these changes (after removal of the transient excess cases associated with hormone-replacement therapy). These estimates are shown in terms of both the surplus in diagnoses of early-stage breast cancers and the reduction in diagnoses of late-stage breast cancers — again, under the assumption of a constant underlying disease burden.
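The base-case arithmetic in that passage is simple enough to check by hand; here it is as a short Python function using the rates the authors quote (per 100,000 women), under their stated assumption of a constant underlying disease burden. The function name is my own.

```python
def base_case_excess(early_base: float, early_now: float,
                     late_base: float, late_now: float) -> tuple[float, float, float]:
    """Surplus of early-stage diagnoses, deficit of late-stage diagnoses, and
    their difference (the estimated overdiagnosis), assuming a constant
    underlying disease burden. All rates are per 100,000 women."""
    surplus_early = early_now - early_base
    deficit_late = late_base - late_now
    return surplus_early, deficit_late, surplus_early - deficit_late


if __name__ == "__main__":
    # Rates quoted by the authors: 1976-1978 baseline vs. 2006-2008.
    surplus, deficit, excess = base_case_excess(112, 234, 102, 94)
    print(surplus, deficit, excess)  # 122 8 114
```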

I’ve discussed how mammography has increased the apparent incidence of DCIS before. Ironically enough, I did it in the context of refuting a common antivaccine trope that “increased detection” and increased awareness couldn’t possibly result in an increase in the number of autism diagnoses. The observation that increased screening for virtually any condition can and does reliably result in the detection of preclinical disease and an increase in incidence is perhaps nowhere better shown than in the case of mammography and DCIS.

The second “money figure” (in this case, “money table”) from the paper is Table 2, which estimates the excess breast cancers diagnosed because of screening mammography under four assumptions: a base case (constant “underlying” incidence of breast cancer); a best guess (breast cancer incidence rising at 0.25% per year, as estimated from the rate of increase of breast cancer incidence in women under 40); an extreme assumption (breast cancer incidence increasing 0.5% per year, twice that of the “best guess”); and a “very extreme assumption” (0.5% per year increase in breast cancer incidence plus using the highest estimate of baseline incidence of late stage disease). Here’s the table:

The authors point out that, regardless of the method they used to calculate it, their estimate of the number of women overdiagnosed with breast cancer over the last 30 years was at least 1 million, and the proportion of cancers that were overdiagnosed was 31%, 26%, and 22% in the best guess, extreme, and very extreme estimates, respectively.
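To get a feel for why the estimates shrink as the assumed underlying trend grows, here is a simplified Python sketch. It is not the authors’ method: it simply assumes that both the early stage and late stage baseline rates from 1976 to 1978 would have grown by a constant annual percentage over roughly 30 years in the absence of screening, then repeats the base-case subtraction. The 30-year span is an approximation of mine, the sketch ignores the “very extreme” scenario’s higher late stage baseline, and it will not reproduce the Table 2 figures.

```python
def excess_with_trend(early_base: float, early_now: float,
                      late_base: float, late_now: float,
                      annual_growth: float, years: int = 30) -> float:
    """Estimated excess early-stage diagnoses per 100,000 women when both
    baseline rates are first grown by an assumed constant annual trend
    (a simplification for illustration, not the paper's exact method)."""
    factor = (1.0 + annual_growth) ** years
    expected_early = early_base * factor
    expected_late = late_base * factor
    surplus_early = early_now - expected_early
    deficit_late = expected_late - late_now
    return surplus_early - deficit_late


if __name__ == "__main__":
    for label, growth in (("constant", 0.0), ("0.25%/yr", 0.0025), ("0.5%/yr", 0.005)):
        excess = excess_with_trend(112, 234, 102, 94, growth)
        print(f"{label:>9}: {excess:6.1f} excess cancers per 100,000 women")
```

Because a rising baseline both shrinks the early stage surplus and enlarges the late stage deficit, the estimated excess falls as the assumed trend increases, the same direction in which the published best guess, extreme, and very extreme estimates move.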

Although Welch’s estimate is consistent with a growing literature suggesting a rate of overdiagnosis for screen-detected breast cancers of over 20%, there are still problems with it. Most of these problems derive from using SEER data. For one thing, SEER is often slow to incorporate new clinical and scientific findings. For example, a few years ago I wanted estimates of the number of patients in our SEER catchment area who are HER2-positive. I couldn’t get them, because at the time SEER hadn’t yet incorporated HER2 status into its database, even though it had been used as a prognostic marker for several years before. SEER does now incorporate HER2, but the point is simply that it’s often behind the times, because it’s a big database and decisions about how to change in response to new evidence and clinical findings often come slowly.

Another issue is that stage definitions change over the years. Perhaps more important, one thing that I would have expected someone like Welch to consider, but that he apparently did not (at least not if his discussion is any indication), is the issue of stage migration. Welch defines his stages thusly:

The four stages in this system are the following: in situ disease; localized disease, defined as invasive cancer that is confined to the organ of disease origin; regional disease, defined as disease that extends outside of and adjacent to or contiguous with the organ of disease origin (in breast cancer, most regional disease indicates nodal involvement, not direct extension9); and distant disease, defined as metastasis to organs that are not adjacent to the organ of disease origin. We restricted in situ cancers to ductal carcinoma in situ (DCIS), specifically excluding lobular carcinoma in situ, as done in other studies.10 We defined early-stage cancer as DCIS or localized disease, and late-stage cancer as regional or distant disease.

Fair enough, as far as it goes. However, when one looks at breast cancer over 30 years, one can’t help but note that how we detect node-positive disease (i.e., regional disease) has changed. In fact, the new standard of care, known as sentinel lymph node (SLN) biopsy, can detect lymph nodes with much smaller tumor burdens, particularly when immunohistochemical stains are used, which could mean that over the last decade or so we have been seeing stage migration, largely as a result of detecting smaller metastases. Stage migration is a phenomenon that occurs when more sophisticated imaging studies or more aggressive surgery leads to the detection of tumor spread that wouldn’t have been noted in an identical patient using older tests. In essence, technology moves patients from one stage to another. This is not an insignificant consideration. One study suggested that the stage migration rate was as high as one in four: 40% of patients had “positive” axillary lymph nodes with SLN biopsy, compared to 30% with axillary dissection, meaning that roughly one in four of the node-positive patients identified by SLN biopsy would have been classified as node-negative by the older technique. Another study reported similar results. How this would affect Welch’s analysis is hard to tell, and correcting for it is probably not possible using the SEER database, particularly given that the extent of “up-staging” is not yet fully known. Be that as it may, an increase in the apparent incidence of patients with positive lymph nodes would increase the apparent incidence of advanced disease and blunt any decline in the incidence of advanced disease. I don’t know how large this effect is, but it would suggest that the rate of overdiagnosis is lower than what Welch estimates. How much lower, or whether stage migration is even a significant factor, I can’t say, but I wish that Welch had at least mentioned it.

The bottom line

This latest study by Bleyer and Welch is not, as I’m sure some proponents of “alternative” therapies and “alternative” means of diagnosing breast cancer, such as thermography, will try to argue, evidence that mammography doesn’t work or that it doesn’t save lives. It does. Randomized controlled trials have demonstrated that. However, those trials are old, and it’s not infrequent that “real world” applications of medical tests and treatments will be less effective than demonstrated in RCTs. There is also the “decline effect” to contend with, and I sometimes wonder whether the re-evaluation of mammography that is going on right now is simply one more example of a test not being as good as RCTs originally suggested. On the other hand, there are studies (for instance, this one) that strongly suggest mammography’s value in saving lives, even in younger women (aged 40-49), for whom there is the most controversy regarding mammographic screening.

Be that as it may, the Bleyer and Welch study is simply more evidence that the balance of benefits and harms from mammography is far more complex than perhaps we have appreciated before. It’s very hard for people, even physicians, to accept that not all cancers need to be treated, and the simplicity of messaging needed to promote a public health initiative like mammography can sometimes lead advocacy groups astray from a strictly scientific standpoint. One has only to look at the reactions of some doctors to see this. For instance:

The study was roundly criticized by radiologists who specialize in breast imaging, who questioned its methodology and the suggestion that some cancer-like growths should be ignored.

“It’s kind of unbelievable that they’re telling us we’re finding too many early-stage cancers,” said Dr. Stamatia Destounis, a breast imager in Rochester, N.Y. “Isn’t that the point?”

And:

“This is simply malicious nonsense,” said Dr. Daniel Kopans, a senior breast imager at Massachusetts General Hospital in Boston. “It is time to stop blaming mammography screening for over-diagnosis and over-treatment in an effort to deny women access to screening.”

Dr. Kopans, I’m afraid, is completely wrong. This study is not “malicious nonsense.” It has weaknesses and might well overestimate the rate of overdiagnosis, but overdiagnosis is a real phenomenon. Only someone utterly ignorant of basic cancer biology or (as I suspect) protecting his turf would say something so nonsensical (word choice intentional). We’ve seen him say these sorts of things before, most prominently in response to the USPSTF guidelines proposed three years ago, when he made dire warnings that large numbers of women would die because of them and said of the members of the task force, “I hate to say it, it’s an ego thing. These people are willing to let women die based on the fact that they don’t think there’s a benefit.”

As I said, it’s hard for many physicians to accept that not all cancer necessarily needs treatment. Certainly this is likely to be true for ductal carcinoma in situ (DCIS), which consists of cancerous cells that have not yet invaded through the basement membrane of the ducts. Unfortunately, DCIS is detected predominantly by mammography. Indeed, the authors even point out that their method didn’t allow them to disentangle the incidence of DCIS from that of invasive breast cancer, thanks to the way the SEER database is set up. The problem, of course, is that we don’t know how to predict which cancers will progress and which cancers will not.

Finally, for all the confusion this study causes, there is one spot of good news, and that’s the observation that much of the decline in breast cancer mortality over the last 20 years—yes, contrary to what you might have heard, breast cancer mortality has actually been steadily decreasing—is likely due to improvements in treatment. The authors point this out:

Whereas the decrease in the rate of death from breast cancer was 28% among women 40 years of age or older, the concurrent rate decrease was 42% among women younger than 40 years of age.6 In other words, there was a larger relative reduction in mortality among women who were not exposed to screening mammography than among those who were exposed. We are left to conclude, as others have,17,18 that the good news in breast cancer — decreasing mortality — must largely be the result of improved treatment, not screening. Ironically, improvements in treatment tend to deteriorate the benefit of screening. As treatment of clinically detected disease (detected by means other than screening) improves, the benefit of screening diminishes. For example, since pneumonia can be treated successfully, no one would suggest that we screen for pneumonia.
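The point about improved treatment eroding the benefit of screening can be put in a few lines of Python. This is a deliberately crude model of my own construction, not anything from the paper: it assumes that early detection lowers the case fatality of clinically detected disease by a fixed relative amount, and the case count and percentages are hypothetical.

```python
def deaths_averted_per_1000(cases_per_1000: float, fatality_clinical: float,
                            relative_reduction: float) -> float:
    """Absolute deaths averted per 1,000 women screened, assuming early
    treatment lowers the case fatality of clinically detected disease by a
    fixed relative amount (a deliberately crude model)."""
    fatality_screened = fatality_clinical * (1.0 - relative_reduction)
    return cases_per_1000 * (fatality_clinical - fatality_screened)


if __name__ == "__main__":
    # Hypothetical: 20 relevant cancers per 1,000 women, 25% relative benefit.
    for fatality in (0.40, 0.20, 0.05, 0.0):
        averted = deaths_averted_per_1000(20, fatality, 0.25)
        print(f"case fatality {fatality:.2f} -> {averted:.2f} deaths averted per 1,000")
```

Holding the relative benefit of early treatment fixed, the deaths averted fall from 2.00 to 0.25 per 1,000 as the hypothetical case fatality of clinically detected disease drops from 40% to 5%, and they reach zero when clinically detected disease is always curable, which is the pneumonia case.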

Ironically, one of the reasons that the mammography wars are heating up again, with concerns about overdiagnosis and overtreatment beginning to call the benefits of mammography into question, might be that mammography is a victim of the success of breast cancer treatment. The multidisciplinary combination of surgery, chemotherapy and targeted therapies, and radiation oncology has made breast cancer treatment, even for relatively advanced cases, far more effective than it was 30 years ago. It might be that treatments for breast cancer have improved so much over the last couple of decades that the role of mammography will inevitably decline. Maybe.

There is, however, a lot more that needs to be done before we can conclude that; right now reports of the death of mammography are very premature. To me, what is most important in breast cancer screening right now is to develop reliable predictive tests that tell us which mammographically detected breast cancers can be safely observed and which ones are likely to threaten women’s lives. We are currently at a point where imaging technology has outpaced our understanding of breast cancer biology, or, as Dr. Welch put it, “Our ability to detect things is far ahead of our wisdom of knowing what they really mean.” Until our understanding of biology catches up, the dilemma of overdiagnosis will continue to complicate decisions based on breast cancer screening.