Nasal Spray Vaccine for SARS-CoV-2

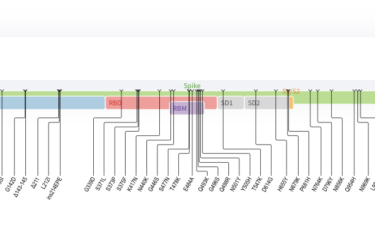

A nasal spray boost may enhance the effectiveness of vaccine defense against COVID.

Using AI Technology To Treat Mental Illness

AI will likely play a big role in treating mental illness in the future, but quack uses are also likely to proliferate.

Super Immunity vs Anti-Vaxxers

Anti-vaxxers continue to spread demonstrable misinformation, while the evidence for the benefits of COVID vaccines grows.

Breakthrough Heart Xenotransplantation from Pig

In a first, a bioengineered pig heart is transplanted into a human recipient, indicating we are on the threshold of a game-changing option for organ transplantation.

COVID Vaccines and Cardiac Effects – Reality vs Lies

COVID mRNA vaccines only result in rare, mild, and transitory myocarditis, but this doesn't stop misinformation from spreading.

Radioactive 5G Pendants

Authorities had to warn the public not to use radioactive products to protect against harmless 5G.

The Need for Science-Based Medicine

The mission of SBM continues.

Treating the Unvaccinated

Should vaccination status be used to triage care for critically ill patients during a crisis?

Omicron

Omicron is the latest variant of concern. It's too early to know what this means, but there is reason for concern.

The Misinformation Dilemma

Is there a workable solution to the social media dilemma?