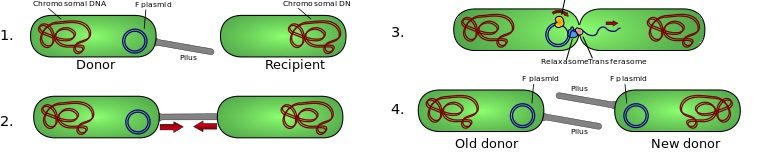

New Tools Against Antibiotic Resistance

Antibiotic resistance is a serious problem that may lead to a post-antibiotic era. However, there are potential solutions that deserve research priority.

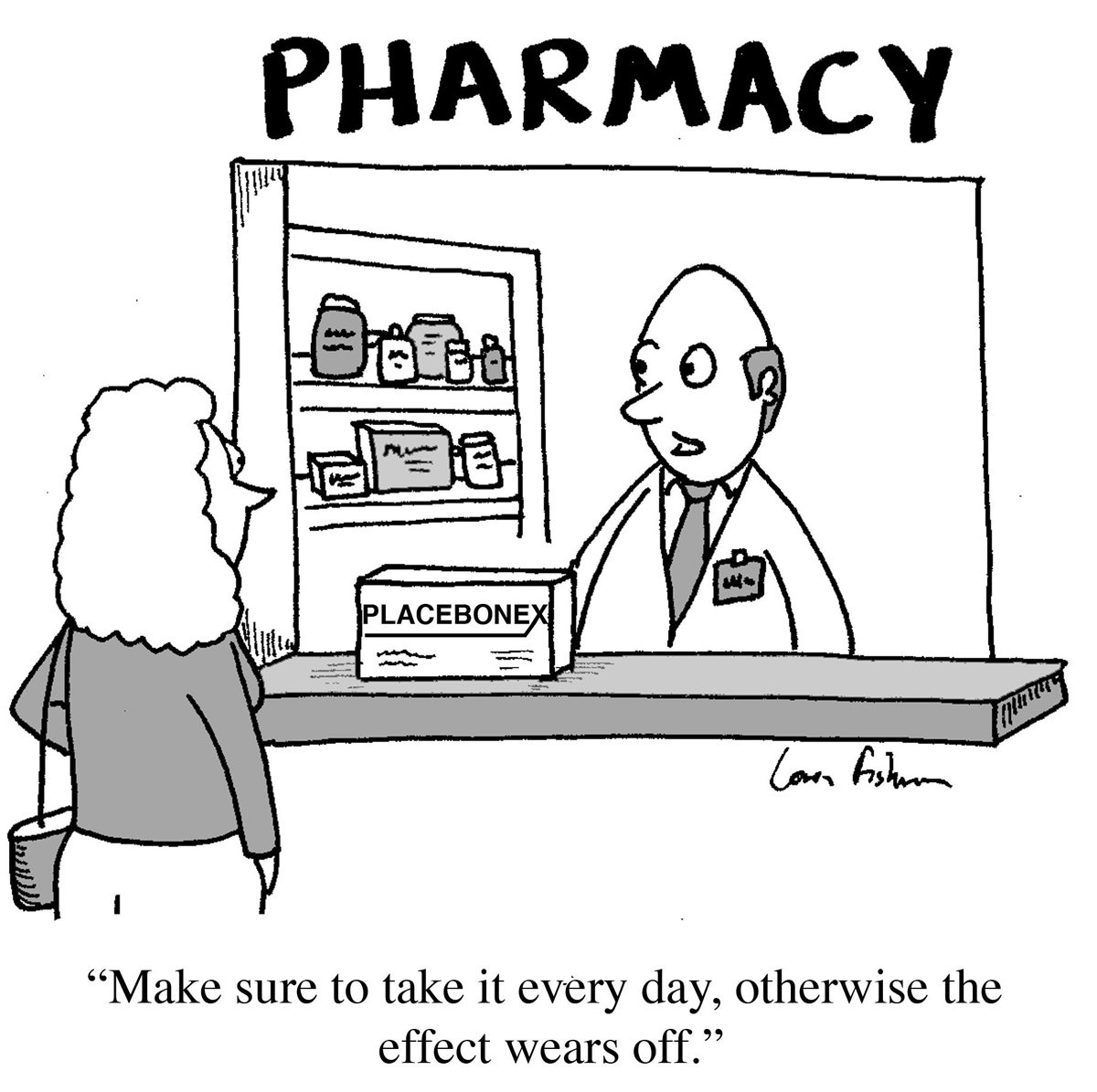

Placebo Myths Debunked

Placebo treatments are often sold as magical mind-over-matter healing effects, but they are mostly just illusions and non-specific effects.

Risks of a Gluten-Free Diet

Non-Celiac Gluten Sensitivity does not seem to be a real entity according to the current evidence, but this has not stopped the gluten-free fad, which may be causing real harm.

ASEA – Still Selling Snake Oil

ASEA's marketing practices, in my opinion, are clearly deceptive. They use a lot of pseudoscientific claims representing the epitome of supplement industry misdirection and obfuscation. They use science as a marketing tool, not as a method for legitimately advancing our knowledge or answering questions about the efficacy of specific interventions.

Jarisch-Herxheimer and Lyme disease

When patients diagnosed with chronic Lyme are treated, whatever happens in response to the treatment is considered by believers to be evidence in support of the diagnosis. If they get better, then that is evidence that the treatment is working. If they get worse, then that is evidence that the treatment is working and they are experiencing the JHR...

Is Mindfulness Meditation Science-Based?

Existing research has not yet clearly defined what mindfulness is and what effect it has. The hype clearly has gone beyond the science, and more rigorous research is needed to determine what specific effects there are, if any.

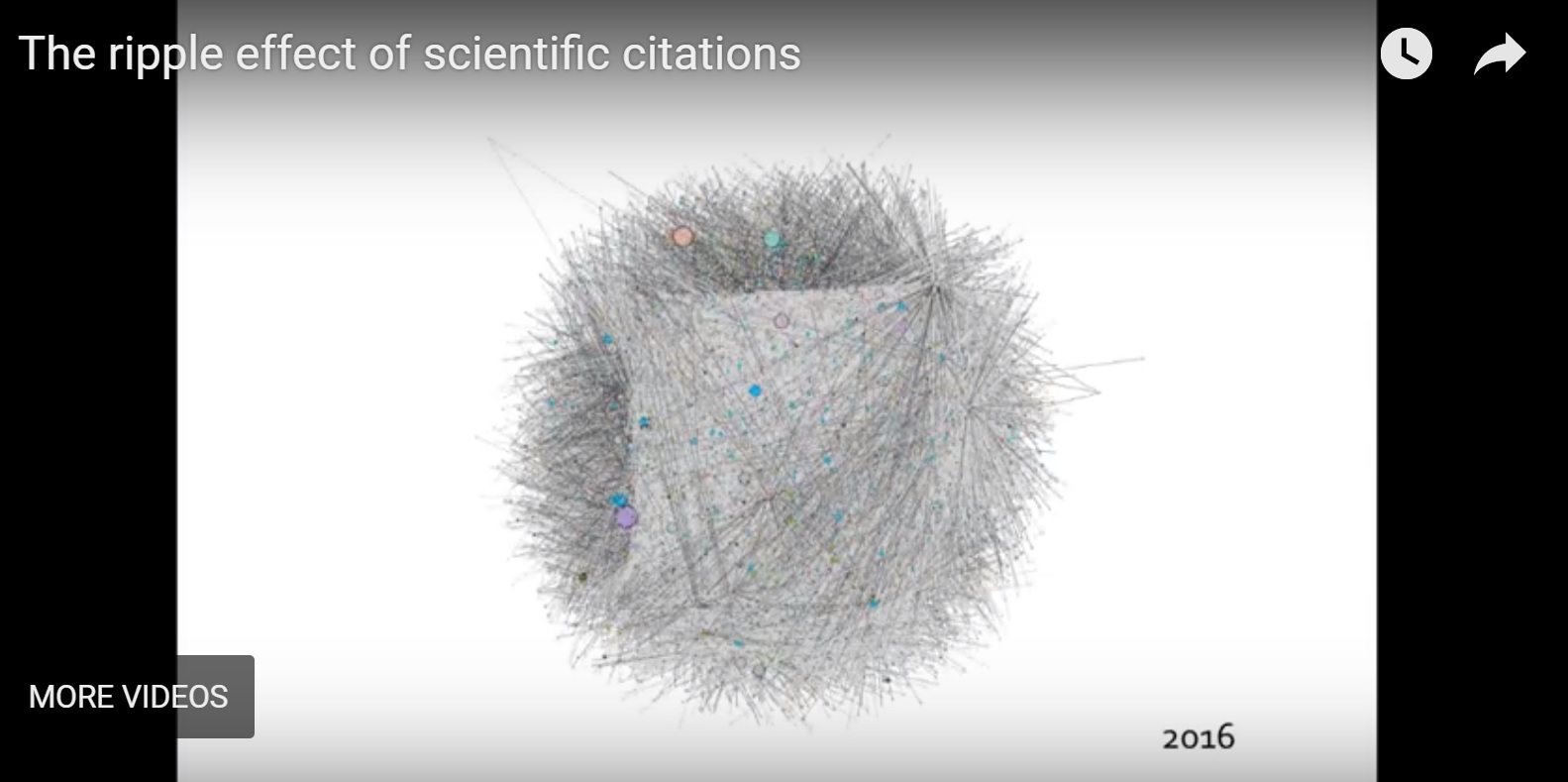

Zombie Science

Retractions of scientific studies do not always mean that the studies die a deserved death. Sometimes they live on as zombie studies, continuing to be cited by other researchers and having an effect on the scientific discussion. We can fix this.

More Integrative Propaganda

Defenders of integrative quackery attack proponents of science-based medicine for simply pointing out the scientific evidence and exposing their poor logic.

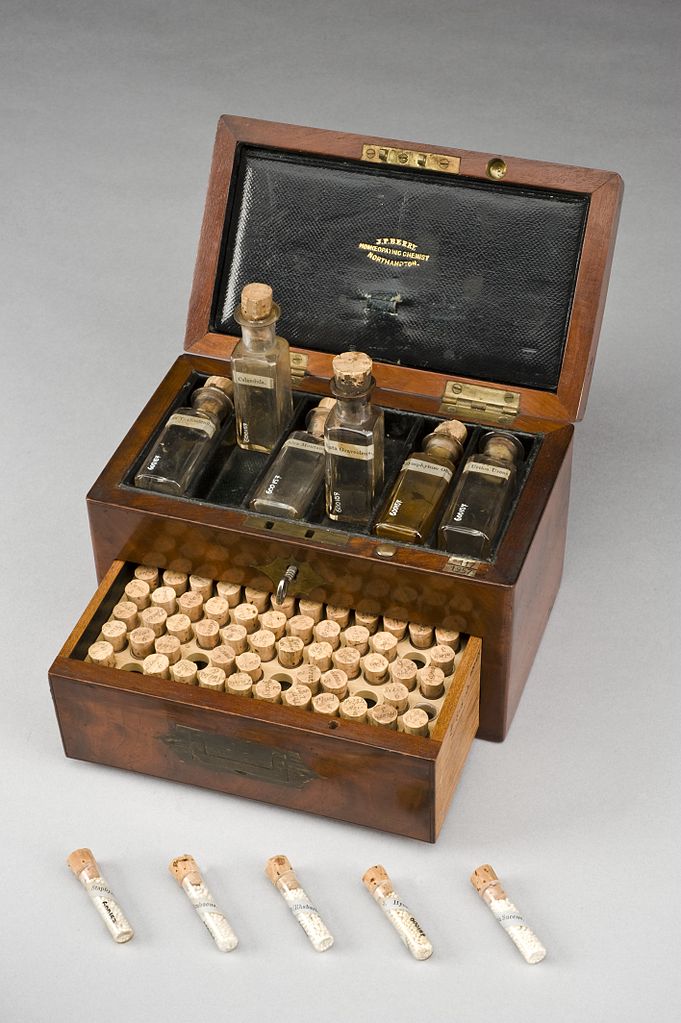

Homeopathy Embarrassing to Integrative Medicine

Homeopathy is the most embarrassing form of alternative medicine, and the easiest to refute. There has been a long series of skeptical wins around the world over the past year, including the University of California, Irvine's decision to scrub mention of homeopathy from the homepage of its latest integrative medicine center. Hopefully, if we can keep up the pressure, the trend will continue!

SBM Progress Report

Science-Based Medicine has been operating for a decade. While we have been successful by many measures, the challenges we face remain great. Here is a look at the mission of SBM, and a call to our readers for support.