Homeopathy Embarrassing to Integrative Medicine

Homeopathy is the most embarrassing form of alternative medicine, and the easiest to refute. There has been a long series of skeptical wins around the world over the past year, including the University of California, Irvine's decision to scrub any mention of homeopathy from the homepage of its latest integrative medicine center. Hopefully, if we can keep up the pressure, the trend will continue!

TCM (Traditional Chinese Medicine): New Developments

Evidence for the efficacy of Traditional Chinese Medicine is scanty, unconvincing, and often fraudulent. China is seeing a resurgence of TCM, even teaching it to children. But in Australia, restrictions are being placed on misleading advertising.

Ty Bollinger’s “The Truth About Cancer” and the unethical marketing of the unproven cancer virotherapy Rigvir

Last week, I wrote about Rigvir, a "virotherapy" promoted by the International Virotherapy Center (IVC) in Latvia, which did not like what I had to say. When a representative called me to task for describing the marketing of Rigvir through patient testimonials as irresponsible, it prompted me to look at how Ty Bollinger's The Truth About Cancer series promoted Rigvir through...

Maximized Living: “5 Essentials” of Chiropractic Marketing Propaganda

What do vitalism, old school chiropractic subluxations, germ theory denial, detox supplements, marketing gimmicks, and practicing way beyond a reasonable scope have in common?

If you feel better, should you stop taking your antibiotics?

A recent paper suggests that patients would be better off stopping antibiotics when they feel better, instead of completing the entire amount prescribed. Could this approach reduce antibiotic overuse and the risk of widespread resistance?

Quackademic Medicine at UC Irvine

UC Irvine's medical school opens a center for integrative medicine, selling out to promote quackery in medicine.

Flu Shots: Here We Go Again!

The many myths about flu shots continue to circulate and persuade some people not to get a flu shot. Flu shots are excellent insurance, safe and reasonably effective. Immunization protects not only the recipient but also vulnerable groups in the community.

Rigvir: Another unproven and dubious cancer therapy to be avoided

Recently, the Hope4Cancer Institute, a quack clinic in Mexico, has added a treatment known as Rigvir to its coffee enemas and other offerings. But what is Rigvir? It turns out that it's an import from Latvia with a mysterious history. Proponents claim that it is an oncolytic virus that targets cancer specifically and leaves normal cells alone. Unfortunately, there is a profound...

The influenza vaccine and miscarriages: Much ado about nothing

A study published on Wednesday claims to have found a link between influenza vaccination and miscarriage, and antivaxers are gloating. The study itself suffers mightily from post hoc subgroup analyses on small numbers, so much so that even its authors don’t really believe its results. None of that stopped them from publishing the study, thus justifying "more research" that will almost certainly...

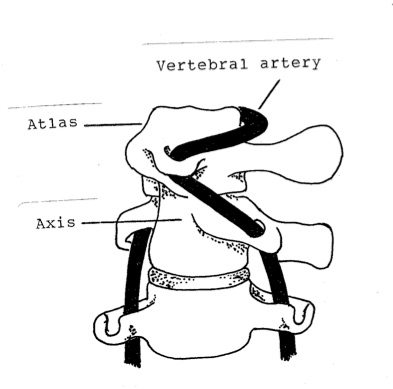

Study: patients should be warned of stroke risk before chiropractic neck manipulation

Another study adds to the growing body of evidence that chiropractic neck manipulation is a risk factor for stroke. Patients should be warned of the risk.

Don’t Blame the Patient