Tag: errors

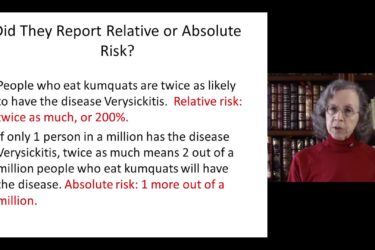

Incorrect Numbers

Having made them myself, I completely understand that doctors can make errors. However, I can't fathom why some of them don't seem to care about correcting them, especially considering the health of children is at stake.

Cognitive Traps

In my recent review of Peter Palmieri’s book Suffer the Children, I said I would later try to cover some of the many other important issues he brings up. One of the themes in the book is the process of critical thinking and the various cognitive traps doctors fall into. I will address some of them here. This is not meant to...

Diagnosis, Therapy and Evidence

When Dr. Novella recently wrote about plausibility in science-based medicine, one of our most assiduous commenters, Daedalus2u, added a very important point. The data are always right, but the explanations may be wrong. The idea of treating ulcers with antibiotics was not incompatible with any of the data about ulcers; it was only incompatible with the idea that ulcers were caused by...

The Mythbusters of Psychology

Karl Popper said, “Science must begin with myths and with the criticism of myths.” Popular psychology is a prolific source of myths. It has produced widely held beliefs that “everyone knows are true” but that are contradicted by psychological research. A new book does an excellent job of mythbusting: 50 Great Myths of Popular Psychology: Shattering Widespread Misconceptions about Human Behavior by...

Why We Need Science: “I saw it with my own eyes” Is Not Enough

I recently wrote an article for a community newspaper attempting to explain to scientifically naive readers why testimonial “evidence” is unreliable; unfortunately, they decided not to print it. I considered using it here, but I thought it was too elementary for this audience. I have changed my mind and I am offering it below (with apologies to the majority of our readers),...