Tag: evidence-based medicine

Fighting Against Evidence

For the past 17 years Edge magazine has put an interesting question to a group of people they consider to be smart public intellectuals. This year’s question is: What Scientific Idea is Ready for Retirement? Several of the answers display, in my opinion, a hostility toward science itself. Two in particular aim their sights at science in medicine, the first by Dean...

Philosophy Meets Medicine

Note: This was written as a book review for Skeptical Inquirer magazine and will be published in its Jan/Feb 2014 issue. Medicine is chock-full of philosophy and doesn’t know it. Mario Bunge, a philosopher, physicist, and CSI (Center for Skeptical Inquiry) fellow, wants to bring philosophy and medicine together for mutual benefit. He has written a book full of insight and wisdom, Medical Philosophy:...

“Moneyball,” the 2012 election, and science- and evidence-based medicine

Regular readers of my other blog probably know that I’m into more than just science, skepticism, and promoting science-based medicine (SBM). I’m also into science fiction, computers, and baseball, not to mention politics (at least more than average). That’s why our recent election, coming as it did hot on the heels of the World Series in which my beloved Detroit Tigers utterly...

The Forerunners of EBM

The term “evidence-based medicine” first appeared in the medical literature in 1992. It quickly became popular and developed into a systematic enterprise. A book by Ulrich Tröhler, To Improve the Evidence of Medicine: The 18th century British origins of a critical approach, argues that its roots go back to the 1700s in Scotland and England. An e-mail correspondent recommended it to me....

“How do you feel about Evidence-Based Medicine?”

That was the question asked on a Medscape Connect discussion. I did a double-take. How do you feel? Could anybody object to the idea of basing treatments on evidence? The doctor who started the discussion asked: Besides using EBM, a lot of my prescribing comes from anecdotal experience and intuition. How about you? Where do you get your information from that you...

What is Science?

Consider these statements: …there is an evidence base for biofield therapies. (citing the Cochrane Review of Touch Therapies) The larger issue is what constitutes “pseudoscience” and what information is worthy of dissemination to the public. Should the data from our well conducted, rigorous, randomized controlled trial [of ‘biofield healing’] be dismissed because the mechanisms are unknown or because some scientists do not...

On the “individualization” of treatments in “alternative medicine,” revisited

As I contemplated what I’d like to write about for the first post of 2012, I happened to come across a post by former regular and now occasional SBM contributor Peter Lipson entitled Another crack at medical cranks. In it, Dr. Lipson discusses one characteristic that allows medical cranks and quacks to attract patients, namely the ability to make patients feel wanted,...

Dummy Medicine, Dummy Doctors, and a Dummy Degree, Part 2.2: Harvard Medical School and the Curious Case of Ted Kaptchuk, OMD (cont. again)

“Strong Medicine”: Ted Kaptchuk and the Powerful Placebo At the beginning of the first edition of The Web that has no Weaver, published in 1983, author Ted Kaptchuk portended his eventual academic interest in the placebo: A story is told in China about a peasant who had worked as a maintenance man in a newly established Western missionary hospital. When he retired...

Exorcism and Sorcery as Health Benefits?!

Luis Fernando Verissimo, a Brazilian writer, once proposed “voodoopuncture”. Instead of going to the acupuncturist, you would be treated without leaving home. The voodoopuncturist would stick acupuncture needles in the voodoo dolls of you! I add that voodoopuncture could be outsourced to Haiti and/or China. It is a win-win-win situation! — Leonardo Monasteri, Brazilian economist As unbelievable as this might sound, “voodoopuncture”...

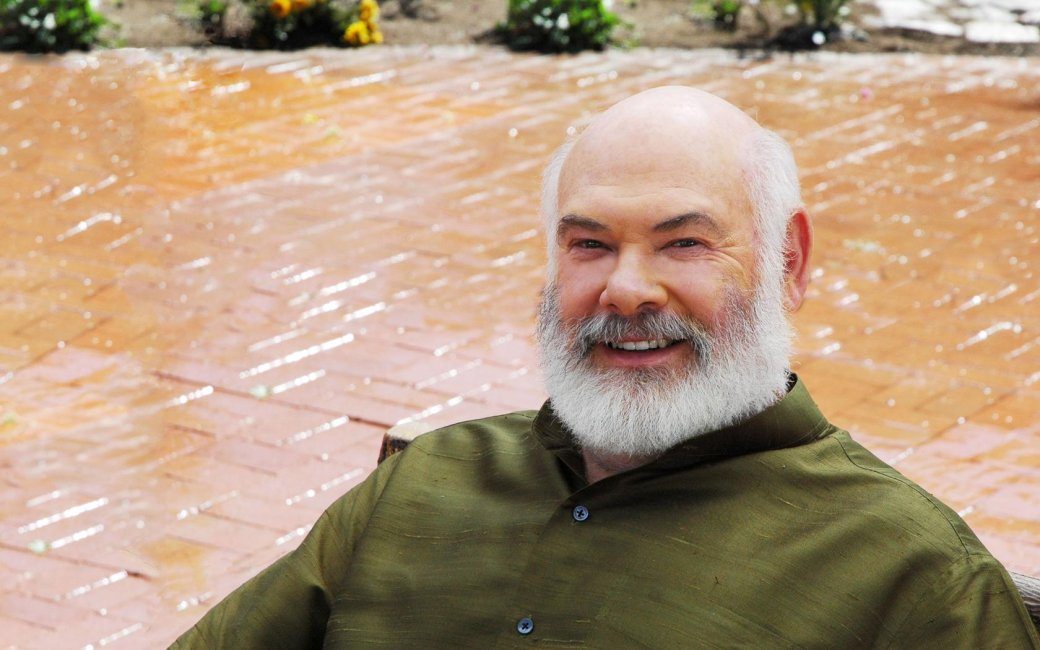

Surprise, surprise! Dr. Andrew Weil doesn’t like evidence-based medicine

Dr. Andrew Weil is a rock star in the “complementary and alternative medicine” (CAM) and “integrative medicine” (IM) movement. Indeed, it can be persuasively argued that he is one of its founders, at least a founder of its most modern iteration, and I am hard-pressed to think of anyone who did more in the early days of the CAM/IM movement, back...