One issue that keeps coming up time and time again for me is the issue of screening for cancer. Because I’m primarily a breast cancer surgeon in my clinical life, that means mammography, although many of the same issues come up time and time again in discussions of using prostate-specific antigen (PSA) screening for prostate cancer. Over time, my position regarding how to screen and when to screen has vacillated—er, um, evolved, yeah, that’s it—in response to new evidence, although the core, including my conclusion that women should definitely be screened beginning at age 50 and that it’s probably also a good idea to begin at age 40 but less frequently during that decade, has never changed. What does change is how strongly I feel about screening before 50.

My changes in emphasis and conclusions regarding screening mammography derive from my reading of the latest scientific and clinical evidence, but more than evidence is in play here. Mammography, perhaps more than screening for any other disease, is affected by more than just science. Policies regarding mammographic screening are also based on value judgments, politics, and awareness and advocacy campaigns going back decades. To some extent, this is true of many common diseases (i.e., whether and how to screen for them is about more than just science), but in breast cancer these issues are arguably more intense. Add to that the seemingly eternal conflict between the needs of medical communication, in which a simple message must be repeated over and over to get through, and the messy science, which tells us that the benefits of mammography are confounded by issues such as lead time and length bias that make it difficult indeed to tell whether mammography (or any screening test for cancer, for that matter) saves lives and, if it does, how many. Part of the problem is that mammography preferentially detects the very tumors that are less likely to be deadly. Given all this, it’s not surprising that what I like to call the “mammography wars” heat up periodically. This is not a new controversy but a recurring one, and usually that is a good thing.

And these wars just heated up a little bit again late last week.

Applying the heat were two Dartmouth Medical School faculty members, whose criticisms of the “overselling” of mammography led to headlines like these on Thursday and Friday:

- Breast Cancer: Komen Oversells Mammograms, Doctors Say

- Breast cancer charity overstated screening benefits, researchers say

- Breast Cancer Screening: How Komen Oversold the Benefits of Mammography

- Susan G. Komen’s emphasis on mammograms during breast cancer awareness month called into question

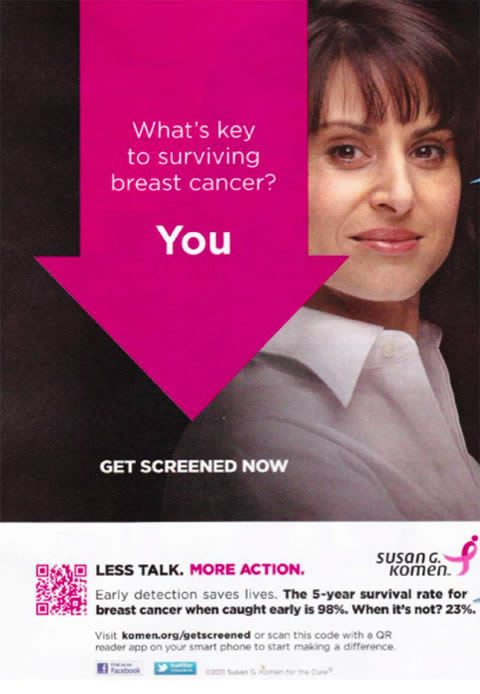

All of these news reports are based on an editorial published Friday in the BMJ by Steven Woloshin and Lisa Schwartz entitled Not So Stories: How a charity oversells mammography. It tells the story of an advertisement used by Susan G. Komen for the Cure during last year’s Breast Cancer Awareness Month, an ad that is still present on the Komen website.

Before I take this on, I need to clearly state my conflict of interest. Specifically, I have a professional relationship with our local Komen affiliate. Sometimes I have disagreed with Komen, in particular three years ago when, it seemed, I was the only person I knew who was receptive to the USPSTF recommendations regarding mammographic screening. Far more often, however, I have admired the work done by our local affiliate “on the ground,” so to speak, and I remain honored to know and work with so many dedicated people who work year in and year out to raise money for breast cancer research, screening, and treatment. That is also why it pains me to take this issue on. However, I feel it is my obligation as the resident breast cancer expert here on Science-Based Medicine to do so, particularly given the confusion this development is likely to cause.

Also, before I go on, I want to make sure, as I always do, that we are very clear on what is being discussed here. We are discussing screening mammography; i.e., using mammography on asymptomatic women at regular intervals in order to detect breast cancer before it becomes symptomatic. We are not discussing diagnostic mammography, which is undertaken either in a woman who feels a lump, in order to evaluate that lump or mass, or in a woman whose screening mammogram reveals an abnormality. We are not discussing diagnostic mammography. We are discussing screening mammography. Once again, I can’t emphasize that distinction enough. Women with symptoms should have mammography and other imaging modalities, such as ultrasound and MRI, as medically indicated. I also have to emphasize yet again that what we are talking about is screening in women at average risk for breast cancer, not women who are at elevated risk due to family history, genetic predisposition, or other factors. In these higher-risk women, screening is more effective because their chances of developing breast cancer are elevated.

With those two important issues and clarifications out of the way, let’s dive in.

Using the wrong statistics? Unfortunately yes, but so do a lot of doctors.

The first thing I wondered about is why the authors picked on Komen when Komen is not the only organization making claims for the benefits of mammography that might be a bit overblown. For instance, the American College of Radiology has a website, Mammography Saves Lives, which, as I will show later, uses arguments even worse than the one in the Komen ad that Woloshin and Schwartz criticize. Of course, the ACR is not a breast cancer advocacy organization; rather, like the AMA, it is a trade organization that protects the interests of its constituents, namely radiologists, and it is in the ACR’s interest to keep mammographic screening programs going. Also, Komen is obviously the biggest kid on the block as far as breast cancer advocacy groups go, and thus the biggest target as well. The National Breast Cancer Coalition, which Woloshin and Schwartz mention (see below), is not nearly as well known as Komen, and it also advocates the completely unrealistic goal of “eliminating” breast cancer by 2020. Its opinion is a minority opinion, at least with respect to screening.

In the meantime, let’s see what Woloshin and Schwartz’s criticism boils down to. They begin with a description of how Breast Cancer Awareness Month has become such a big deal over the last 20 years as a lead-in to this provocative observation:

Unfortunately, there is a big mismatch between the strength of evidence in support of screening and the strength of Komen’s advocacy for it. A growing and increasingly accepted body of evidence shows that although screening may reduce a woman’s chance of dying from breast cancer by a small amount, it also causes major harms.[5,6] In fact, the benefits and harms are so evenly balanced that the National Breast Cancer Coalition, a major US network of patient and professional organisations, “believes there is insufficient evidence to recommend for or against universal mammography in any age group of women.”[7] Even the chief medical officer of the American Cancer Society, which has long promoted screening, calls for balanced information to ensure that women understand the benefits and harms of mammography.[8] Recently in the United Kingdom an independent panel began reviewing the evidence for mammography to help the NHS decide whether the balance of benefits and harms justifies its national screening programme.[9]

Over the last three or four years that I’ve been assessing newer studies of mammography, I have sometimes wondered whether this sort of “coming down to earth” is an example of the “decline effect,” namely the tendency in medicine for early results for an intervention to show much more efficacy than later ones, with that apparent efficacy “declining” over time. For instance, early randomized clinical trials of mammographic screening demonstrated significant reductions in breast cancer-specific mortality in the screened group, and it was on this basis that large population-based screening programs were implemented in the U.S. beginning some 30 to 35 years ago. Now that we have 20 to 30 years’ worth of follow-up data, we are finding that in the real world mammographic screening might not be as effective as it once seemed in clinical trials. Even so, if one accepts the Cochrane Review estimate, it probably still produces a 15% decrease in breast cancer mortality in screened populations. That’s not too shabby. In fact, it’s much better than “not too shabby.” Think of it this way: if mammography really does achieve a 15% reduction in breast cancer mortality, that would potentially be roughly 6,000 lives saved per year, given that there are around 40,000 deaths from breast cancer each year in the U.S. Having lost my mother-in-law to breast cancer three years ago, I can only imagine the amount of pain, both emotional and physical, such a number represents. So, while there is no doubt that mammography can save lives, the debate remains over how many and at what cost. Balancing these risks and benefits then becomes a question that relies on our values and on how much we as a society are willing to pay to save one life. These are not questions that science alone can answer, but science must inform the answers.

Arguments over the real-world efficacy of mammographic screening for reducing breast cancer mortality aside, here is the meat of the criticism of the Komen ad (pictured above):

The advertisement states that the key to surviving breast cancer is for women to get screened because “early detection saves lives. The 5-year survival rate for breast cancer when caught early is 98%. When it’s not? 23%.”

This benefit of mammography looks so big that it is hard to imagine why any woman would forgo screening. She’d have to be crazy.

But it’s the advertisement that is crazy. Why? Because screening changes the point during the course of cancer when a diagnosis is made. Without mammography screening, a diagnosis is made when the tumour can be felt. With screening, diagnosis is made years earlier when tumours are too small to feel. Five year survival is all about what happens from the time of diagnosis: it is the proportion of women who are alive five years after diagnosis. Because screening finds cancers earlier, comparing survival between screened and unscreened women is hopelessly biased.

I must also give props to Barnett Kramer for the best analogy ever to explain lead time bias to the lay public, an analogy cited by Woloshin and Schwartz:

Barnett Kramer, director of the National Cancer Institute’s Division of Cancer Prevention, explained lead time bias by using an analogy to The Rocky and Bullwinkle Show, an old television cartoon popular in the US in the 1960s. In a recurring segment, Snidely Whiplash, a spoof on villains of the silent movie era, ties Nell Fenwick to the railroad tracks to extort money from her family. She will die when the train arrives. Kramer says, “Lead time bias is like giving Nell binoculars. She will see the train—be ‘diagnosed’—when it is much further away. She’ll live longer from diagnosis, but the train still hits her at exactly the same moment.”

In other words, for any screening test, it is very difficult to tell how much of the measured effect on five- or ten-year survival rates is due to the binoculars and how much is due to an actual effect on slowing down or stopping the train. I once again refer readers to previous posts that I’ve written on the issue. Add to this the tendency of mammography and other routine screening tests to preferentially detect slower-moving trains (or even trains that have stopped), which is length bias. That’s why mortality statistics, at least from a population standpoint, are more reliable. In terms of determining whether a test is efficacious, randomized controlled trials are an excellent starting point, but it is mortality statistics that measure the “real world” effectiveness of the test.
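To make the binoculars concrete, here is a minimal Python sketch with a made-up cohort of five women. The death dates, the year of clinical diagnosis, and the four-year lead time are all hypothetical, chosen only for illustration. Screening is assumed to do nothing except move the date of diagnosis earlier, yet five-year survival jumps while the number of deaths by any given year stays exactly the same.

```python
"""Toy illustration of lead time bias (all numbers are hypothetical)."""

# Year (from an arbitrary starting point) in which each woman dies of breast cancer.
# Assumption: screening does NOT change these dates, i.e., it gives no real benefit.
DEATH_YEARS = [12, 13, 14, 16, 20]
CLINICAL_DIAGNOSIS_YEAR = 10  # hypothetical: tumor found when it becomes palpable
LEAD_TIME_YEARS = 4           # hypothetical: how much earlier screening finds it


def five_year_survival(diagnosis_year: int, death_years: list[int]) -> float:
    """Fraction of women still alive five years after diagnosis."""
    alive = [d for d in death_years if d - diagnosis_year > 5]
    return len(alive) / len(death_years)


def deaths_by(year: int, death_years: list[int]) -> int:
    """Cumulative deaths by a given year (a crude mortality measure)."""
    return sum(1 for d in death_years if d <= year)


unscreened = five_year_survival(CLINICAL_DIAGNOSIS_YEAR, DEATH_YEARS)
screened = five_year_survival(CLINICAL_DIAGNOSIS_YEAR - LEAD_TIME_YEARS, DEATH_YEARS)

print(f"Five-year survival, unscreened: {unscreened:.0%}")  # 40%
print(f"Five-year survival, screened:   {screened:.0%}")    # 100%
# The train still arrives at the same moment, so mortality is unchanged:
print(f"Deaths by year 15: {deaths_by(15, DEATH_YEARS)} with or without screening")
```

The only thing that changed is when the survival clock starts, which is why comparing mortality, not survival from diagnosis, is the informative comparison.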

Whether Komen realizes it or not, this is exactly the same fallacy that I described a while back in deconstructing a poorly done study that purported to demonstrate that the United States does a lot better than Europe in terms of diagnosing and treating cancer, all with the subtext that single-payer and government-funded universal health care programs don’t do as well as our supposed “free market” approach. The authors compared the survival data for cancer in several countries and found that survival in the U.S. was better. Not surprisingly, this difference in apparent survival was driven mostly by breast cancer and prostate cancer, the two cancers for which we in the U.S. screen more aggressively than is done in Europe. Also not surprisingly, when one looks at mortality data, one finds that there is very little difference between the U.S. and Europe and that some countries appear to be doing better than we are for certain cancers. In other words, when you look at five- and ten-year survival statistics, it looks as though the U.S. is doing much better, but when you look at mortality data, it’s a mixed bag, with the U.S. doing better for some cancers and not as well for others.

It is also a fallacy to which, unfortunately, many doctors (who really should know better) fall prey, as a recent survey of primary care physicians demonstrates. Basically, when polled about hypothetical scenarios involving a new screening test, physicians were much more impressed by a reported increase in five-year survival or in detection than they were by a reported decrease in mortality. This survey indicates that many physicians do not understand the concepts of lead time and length bias. Indeed, some physicians who should know better than a family physician still don’t; Dr. Deborah Rhodes is one example. That is why I think it is unlikely that Komen was intentionally trying to deceive or oversell mammography with this advertisement. Rather, I strongly suspect that this ad came about from the same basic misunderstanding of the proper methodology for determining the utility of a screening test for cancer that afflicts large swaths of the medical field, including, unfortunately, oncologists and radiologists.

I also couldn’t find the source of the assertion that five-year survival for breast cancers discovered early is 98%, while it is 23% when they are not discovered early. Presumably, the ad is comparing stage I disease with stage IV disease and implying that mammographic screening is the difference between being diagnosed at stage I and being diagnosed at stage IV. As Woloshin and Schwartz correctly point out, five-year survival for early- and late-stage cancers tells us nothing about the benefits of screening. In fact, it is known that tumors detected by mammography tend to have a better prognosis than those detected by other means (i.e., symptoms), but figuring out how much of that difference is “real” and how much is due to lead time bias is anything but simple. In actuality, most of the difference is probably due to lead time bias, although it is clear that screening provides some benefit.

Absolute versus relative benefit versus population benefit versus overdiagnosis

Another issue that demands clarity, often does not receive it, and is illuminated by this latest blowup is absolute risk versus relative risk. When I refer to a 15-25% reduction in the risk of dying of breast cancer in populations screened using mammography, what, exactly, does that mean? I’ve pointed out before in discussing screening by mammography that, to avert one death from breast cancer with mammographic screening in women between the ages of 50 and 70, 838 women need to be screened over 6 years, for a total of 5,866 screening visits, to detect 18 invasive cancers and 6 instances of ductal carcinoma in situ (DCIS). In other words, mammographic screening is very labor- and resource-intensive, and a lot of women have to be screened to save one life.
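The figures in that paragraph can be unpacked with a few lines of Python arithmetic. Only the numbers quoted above are used; the variable names are just illustrative labels.

```python
# Figures quoted in the text for averting one breast cancer death (ages 50-70).
WOMEN_SCREENED = 838
SCREENING_VISITS = 5_866
INVASIVE_CANCERS = 18
DCIS_CASES = 6
DEATHS_AVERTED = 1

visits_per_woman = SCREENING_VISITS / WOMEN_SCREENED
cancers_per_death_averted = (INVASIVE_CANCERS + DCIS_CASES) / DEATHS_AVERTED

print(f"Screening visits per woman over 6 years: {visits_per_woman:.1f}")  # 7.0
print(f"Invasive cancers + DCIS detected per death averted: "
      f"{cancers_per_death_averted:.0f}")  # 24
```

Put differently, for each death averted, about two dozen women are diagnosed with invasive cancer or DCIS through screening.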

Woloshin and Schwartz also point out that, over 10 years, mammographic screening is associated with a reduction in the risk of dying of breast cancer from 0.35% to 0.30% for women aged 40 to 49 (a relative risk reduction of 14%), from 0.53% to 0.46% for women aged 50 to 59 (a relative risk reduction of 13%), and from 0.83% to 0.56% for women aged 60 to 69 (a relative risk reduction of 33%). When examined on a relative basis, a risk reduction of 13 to 33% looks impressive. When examined on an absolute basis (0.05, 0.07, and 0.27 percentage points, respectively), these risk reductions sound a lot less impressive. It is generally a truism in medicine that if you want to make a benefit sound as good as possible, you use relative risk reductions, but if you want to make it sound as unimpressive as possible, you use absolute risk reductions. That’s because absolute risk reduction incorporates a person’s baseline risk of developing the condition being intervened against. The same issue comes up when discussing improvements in five-year survival brought about by chemotherapy for cancer. For instance, I can tell you that, if you have a stage I cancer, chemotherapy will reduce your risk of dying within five years by 30%. That’s a relative number. However, if I cite it in terms of absolute risk reduction (rounding to make the numbers easy), it is a 3% absolute improvement in survival (from 90% to 93%). Personally, I believe in quoting both figures.
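Here is a minimal Python sketch of the relative-versus-absolute arithmetic, using the ten-year risks quoted from Woloshin and Schwartz. The number needed to screen (NNS) is simply the reciprocal of the absolute risk reduction; it is not a figure they report, just a derived quantity that makes the same point from another angle. The last lines check the illustrative chemotherapy example, expressed as a drop in the five-year risk of death from 10% to 7%.

```python
# Ten-year risk (%) of dying of breast cancer: (unscreened, screened),
# as quoted in the text from Woloshin and Schwartz.
TEN_YEAR_RISK_PCT = {
    "40-49": (0.35, 0.30),
    "50-59": (0.53, 0.46),
    "60-69": (0.83, 0.56),
}


def risk_reductions(baseline_pct: float, treated_pct: float) -> tuple[float, float, float]:
    """Return (absolute reduction in percentage points, relative reduction, NNS)."""
    arr_points = baseline_pct - treated_pct  # absolute risk reduction
    rrr = arr_points / baseline_pct          # relative risk reduction (fraction)
    nns = 100 / arr_points                   # number needed to screen (or treat)
    return arr_points, rrr, nns


for ages, (unscreened, screened) in TEN_YEAR_RISK_PCT.items():
    arr, rrr, nns = risk_reductions(unscreened, screened)
    print(f"Ages {ages}: ARR {arr:.2f} points, RRR {rrr:.0%}, "
          f"about {nns:.0f} women screened for 10 years per death averted")

# Illustrative chemotherapy example: five-year risk of death falls from 10% to 7%.
arr, rrr, _ = risk_reductions(10, 7)
print(f"Chemotherapy: ARR {arr:.0f} points, RRR {rrr:.0%}")  # ARR 3 points, RRR 30%
```

The same 3-point absolute gain can be truthfully advertised as a 30% improvement, which is exactly why quoting both figures matters.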

There’s another way to look at it, too. Let’s take the 50-59 year old age group. In the most recent census, there were approximately 21,506,000 such women in the U.S. A decrease of 0.07 percentage points in the chance of dying of breast cancer translates into approximately 15,000 deaths averted over ten years, or approximately 1,500 per year. Using these figures, the comparable number for the 40-49 year age range is roughly 11,000 deaths averted over ten years, and for the 60-69 year age range, 30,000. These numbers add up to roughly 5,600 breast cancer deaths averted per year, which is pretty close to my original estimate above of 6,000, reassuring me that at least I’m in the ballpark. Obviously, these are not inconsequential numbers. Each one is a woman’s life saved from breast cancer. They are, however, aggregate estimates for the entire population, which makes them useful for policy, as a guide to how many women screening can save, but not particularly useful for a single woman trying to decide whether to undergo screening.
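The population-level arithmetic can be reproduced with a short Python sketch. Only the 50-59 calculation is done from scratch here. The 40-49 and 60-69 ten-year totals are taken as given from the paragraph above, and the comparison figure is the 15% of roughly 40,000 annual U.S. breast cancer deaths discussed earlier.

```python
WOMEN_AGED_50_59 = 21_506_000    # census figure quoted in the text
ARR_50_59 = (0.53 - 0.46) / 100  # ten-year absolute risk reduction, as a fraction

averted_50_59_10y = WOMEN_AGED_50_59 * ARR_50_59
print(f"Ages 50-59: ~{averted_50_59_10y:,.0f} deaths averted over 10 years")  # ~15,054

# Rounded ten-year totals from the text for ages 40-49, 50-59, and 60-69.
TEN_YEAR_TOTALS = [11_000, 15_000, 30_000]
per_year = sum(TEN_YEAR_TOTALS) // 10
print(f"All three age groups: ~{per_year:,} deaths averted per year")  # ~5,600

# Earlier estimate: a 15% reduction applied to ~40,000 annual U.S. deaths.
earlier_estimate = 40_000 * 15 // 100
print(f"15% of 40,000: {earlier_estimate:,}")  # 6,000
```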

Finally, there is the issue of overdiagnosis. It is inherent in any screening test that it will pick up “subclinical disease.” The question in cancer is whether this subclinical disease will ever progress to endanger the life of the patient. In the case of breast cancer, as I’ve discussed on many occasions before, lead time bias and, in particular, length bias mean that mammography tends to detect lesions that are slower growing, some of which might grow so slowly that they would never become clinically apparent within the patient’s lifespan, much less endanger her life. Part and parcel of this tendency is the vastly increased incidence of DCIS. Back in the early 1900s, DCIS was rare because by the time it grew large enough to be a palpable mass, it had almost always become invasive cancer. Now, thirty years or so after mass mammographic screening programs began, DCIS is common; approximately 30-40% of breast cancer diagnoses are in fact DCIS. Indeed, a recent study found that DCIS incidence rose from 1.87 per 100,000 in the mid-1970s to 32.5 per 100,000 in 2004. That’s a roughly 17-fold increase over 30 years, and it’s pretty much all due to the introduction of mammographic screening. When it comes to DCIS, we don’t have a good handle on what percentage will progress to invasive cancer, but we do know that a significant percentage will not. The problem, of course, is that right now there is no way to predict for any individual patient whether her DCIS will progress, so we treat them all the same. Similarly, a significant percentage of invasive cancers detected by mammography alone will not progress appreciably, yet they are all treated because we cannot tell which ones will and will not progress. In other words, overdiagnosis leads to overtreatment. Unfortunately, consideration of all these issues is not easily compatible with the need for simple messages that motivate people to act.
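Length bias can be illustrated with a minimal Python sketch. The assumptions are entirely hypothetical: two kinds of tumor arise at the same rate, but one spends half a year in the window where it is visible on a mammogram yet not causing symptoms, while the other spends three years there. In a steady state, the number of tumors sitting in that window at any moment is roughly the incidence multiplied by the time spent in it, so a single round of screening is dominated by the slow growers.

```python
# Hypothetical tumor types: equal incidence, very different preclinical windows.
INCIDENCE_PER_YEAR = {"fast-growing": 100, "slow-growing": 100}
PRECLINICAL_YEARS = {"fast-growing": 0.5, "slow-growing": 3.0}


def detectable_at_one_screen(incidence, preclinical_years):
    """Steady-state count of tumors visible at a single screen: incidence x duration."""
    return {kind: incidence[kind] * preclinical_years[kind] for kind in incidence}


detected = detectable_at_one_screen(INCIDENCE_PER_YEAR, PRECLINICAL_YEARS)
total = sum(detected.values())
for kind, count in detected.items():
    share_of_new_cases = INCIDENCE_PER_YEAR[kind] / sum(INCIDENCE_PER_YEAR.values())
    print(f"{kind}: {share_of_new_cases:.0%} of new tumors, "
          f"{count / total:.0%} of screen-detected tumors")
```

Nothing about the slow growers’ biology changed; screening simply catches them more often, so screen-detected cancers look more favorable before any benefit of screening is even considered.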

I also can’t help but note that the ad above is jarringly out of sync with the science-based recommendations elsewhere on the Komen website. For instance, Komen’s screening recommendations for women at average risk are in line with the scientific consensus, recognizing the areas of controversy regarding screening women between the ages of 40 and 49, and are accompanied by a handy-dandy table summarizing the mammography recommendations of the various cancer organizations about when and how often to screen. There’s also a statement from Dr. Eric Winer, Komen’s scientific advisor, that is about as reasonable and middle-of-the-road as you could ask for:

Any health-related decision is a personal one that involves balancing risks and benefits. At Susan G. Komen for the Cure®, we encourage all women to follow the recommended breast cancer screening guidelines based upon age, personal risk and physician recommendation. These include getting a mammogram every year starting at age 40 for women at average risk and getting specific screening recommendations from physicians for women at higher risk. We also encourage women to discuss these guidelines with their health care providers, and if they are concerned about overdiagnosis or the value of a screening test, to raise these concerns. Ultimately, each woman needs to make medical decisions that are in keeping with her values and, ideally, maximize her chance of having a long and healthy life.

Dr. Winer even mentions a study that I blogged about a few years back that found that as many as one in three mammography-detected breast cancers might be overdiagnosed. To be objective and fair, Woloshin and Schwartz really should have at least made a brief mention of the other material on Komen’s website to put their criticism of the Komen ad pictured above in proper context. That they did not suggests to me that they might have an agenda with respect to Komen that goes beyond this one ad, because in totality the information Komen distributes about mammographic screening is not out of line with what most relevant organizations recommend, and Komen does recognize and deal with the controversy. Woloshin and Schwartz might not agree with Komen’s conclusions, but they seem to attack this one advertisement as a straw man representing their perception of what Komen actually says about mammography.

Be that as it may, my guess (and, again, this is just a guess) is that Komen fell into the trap of seeking a simple message, combined with a lack of understanding of why survival statistics are not a valid measure of how well a screening test prevents death from cancer. In that, Komen is not alone. Indeed, as I mentioned above, if you really want to see arguments even worse than the one in the advertisement above, look no further than the American College of Radiology:

The USPSTF agrees that mammography screening saves lives beginning at age 40. They used the smallest possible decrease in deaths (15%) that can be derived from the most rigorous scientific studies called randomized, controlled trials (RCT). As a result of not having experts on the Panel, they did not realize that the RCT are designed in such a way that they underestimate the benefit of screening so that 15% was the very lowest benefit. They ignored the other scientific evidence that shows that the benefit is at least twice that. In the United States the death rate from breast cancer was unchanged for 50 years prior to the onset of mammography screening in the mid 1980’s. Soon after, the death rate began to decrease, and since 1990 it has declined by 30%. This is not a victory over breast cancer, but a remarkable achievement. The USPSTF not only agreed that the benefit applied to women in their forties, but that the decrease in deaths has been even higher among these women. Relying on computer models (sophisticated financial computer models failed to predict the economic crash), they ignored direct studies from the Netherlands and Sweden that showed that most of the decrease in deaths was due to mammography screening and not new therapy. In Sweden, where women take fuller advantage of screening, the death rate had decreased by over 40% in several studies. Using these figures, instead of the 15%, women in their forties would have been well within the threshold that the Task Force had, arbitrarily, set for supporting screening.

Note the poisoning of the well (the reference to financial computer models, which is irrelevant to whether the models used by the USPSTF are valid). Note the blatant confusion of correlation with causation. Just because a decline in breast cancer mortality began around the same time that mammographic screening programs became widespread does not mean that mammographic screening caused it. Without knowing the specific studies to which the statement refers, I can’t analyze them, but I do know that the latest studies from that part of the world, if anything, suggest that the vast majority of the decline in breast cancer mortality is due to better treatment. Also, it was during that same period that the NSABP trials confirmed that adjuvant chemotherapy significantly reduces breast cancer mortality, and as a result its use became widespread. The result has been a major decline in breast cancer mortality attributable to adjuvant chemotherapy. Finally, note the complete misunderstanding, whether willful or not, of what RCTs actually do. It’s ridiculous to claim that RCTs are designed to underestimate benefits; that statement is so wrong that it’s not even wrong. The correct way to state the concept is that RCTs are designed to eliminate biases that tend to lead to overestimates of effect sizes. There’s a huge difference. The ACR seems to be arguing that we should rely on less rigorous studies because they show larger effect sizes. Regular readers know who often makes arguments of this sort.

In fact, the wag in me can’t help but wonder if Woloshin and Schwartz will take on the ACR next. I’d love to see that.

My usual sarcasm aside, the “overselling” of screening tests is widespread, and Komen is not the only one that can be accused of having done it at times. Moreover, contrary to the claims of quacks like Mike Adams that Komen is lying and perpetrating a fraud and that mammograms kill women, it’s almost certainly not due to malice or intentional distortion but rather to a genuine belief that the tests save lives, coupled with a lack of understanding of the issues involved in determining whether a given screening test actually achieves what its proponents claim it can do. Science is messy, and the science of screening tests is among the messiest. I can only hope that Komen learns from this and doesn’t use the same ad this October.