Month: March 2012

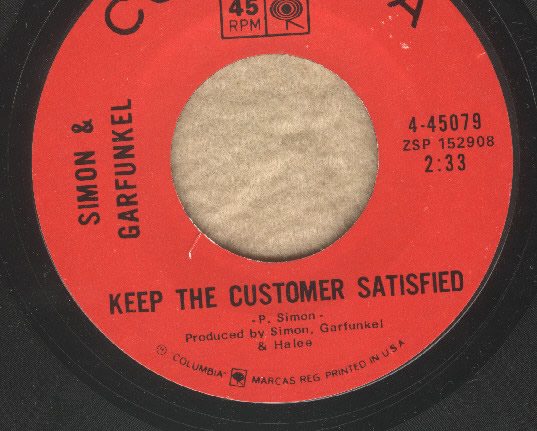

Keeping the customer satisfied

These days patient satisfaction is becoming more and more important in judging how well hospitals and physicians are doing. Indeed, Medicare reimbursements are linked in part to a patient satisfaction survey administered by the government. The underlying assumption is that patient satisfaction correlates with high-quality care. But is that true? I have my doubts. Indeed, there is evidence that, at best,...

An Appraisal of Courses in Veterinary Chiropractic

Today’s guest article, by Ragnvi E. Kjellin, DVM, and Olle Kjellin, MD, PhD, was submitted to a series of veterinary journals, but none of them wanted to publish it. ScienceBasedMedicine.org is pleased to do so. Animal chiropractic is a relatively new phenomenon that many veterinarians may know too little about. In Sweden, chiropractic was licensed for humans in 1989, but...

Adherence: The difference between what is, and what ought to be

One of the most interesting aspects of working as a community-based pharmacist is the insight you gain into the actual effectiveness of the different health interventions. You can see the most elaborate medication regimens developed, and then see what happens when the “rubber really hits the road”: when patients are expected to manage their own treatment plan. Not only do we get...

Acupuncture for Migraine

A recent study looking at acupuncture for the prevention of migraine attacks demonstrates all of the problems with acupuncture and acupuncture research that we have touched on over the years at SBM. Migraine is one indication for which there seems to be some support among mainstream practitioners. In fact the American Headache Society recently recommended acupuncture for migraines. Yet, the evidence is...

Brief Update: Protandim

I’ve already devoted more time to Protandim than it deserves. I’ve written about it twice on SBM: here and here. But I can’t resist covering a new Protandim study that not only serves as a bad example but that made me laugh. Protandim is a mixture of 5 herbal supplements intended to upregulate the body’s own production of antioxidants. Its patent...

An antivaccine tale of two legal actions

I don’t know what it is about the beginning of a year. I don’t know if it’s confirmation bias or real, but it sure seems that something big happens early every year in the antivaccine world. Consider. As I pointed out back in February 2009, in rapid succession Brian Deer reported that Andrew Wakefield had not only had undisclosed conflicts of interest...

The Application of Science

It all seemed so easy. In 2010 an article was published in the New England Journal of Medicine, Preventing Surgical-Site Infections in Nasal Carriers of Staphylococcus aureus. Patients were screened for Staphylococcus aureus (including MRSA, methicillin-resistant Staphylococcus aureus), and those who were positive underwent a 5-day perioperative decontamination procedure with chlorhexidine baths and an antibiotic, mupirocin, in the...

Help a reader out: Abstracts that misrepresent the content of the paper

Earlier this week, a reader of ours wrote to Steve and me with a request: First off, I just want to say thank you for everything you gentlemen do. I find that your sites are extremely helpful when trying to figure out what level of information is BS, and what is real. In short, I was wondering if either of you two...

FDA versus Big Supp: Rep. Burton to the Rescue (Again)

The Dietary Supplement Health and Education Act of 1994 (DSHEA) has been aptly described here at SBM as a travesty of a mockery of a sham. The supplement industry’s slick marketing, herb adulteration due to lack of pre-market controls, Quack Miranda Warning, and the many supplements for which claims of effectiveness failed to hold up under scientific scrutiny (e.g., antioxidants, collagen, glucosamine...

Anti-Smoking Laws – The Proof of the Pudding

One consistent theme of SBM is that the application of science to medicine is not easy. We are often dealing with a complex set of conflicting information about a complex system that is difficult to predict. That is precisely why we need to take a thorough and rigorous approach to information in order to make reliable decisions. The same is true when...