Part of the mission of SBM is to continually prod discussion and examination of the relationship between science and medicine, with special attention to those beliefs and movements within medicine that we feel run counter to science and good medical practice. Chief among them is so-called complementary and alternative medicine (CAM) – although proponents are constantly tweaking the branding, for convenience I will simply refer to it as CAM.

Within academia I have found that CAM is promoted largely below the radar, with the deliberate absence of public debate and discussion. I have been told this directly, and that the reason is to avoid controversy. This stance assumes that CAM is a good thing and that any controversy would be unjustified, perhaps the result of bigotry rather than reason. It’s sad to see how successful this campaign has been, even among my fellow academics and scientists who should know better.

The reality is that CAM is fatally flawed in both philosophy and practice, and the claims of CAM proponents wither under direct light. I take some small solace in the observation that CAM is starting to be the victim of its own success – growing awareness of CAM is shedding some inevitable light on what it actually is. Further, because CAM proponents are constantly trying to bend and even break the rules of science, this forces a close examination of what those rules should actually be, how they work, and their strengths and weaknesses.

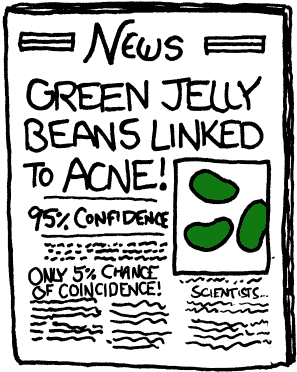

This brings me to the specific topic of this article – the dreaded p-value. The p-value is a frequentist statistical measure of the data of a study. Unfortunately it has come to be looked at (by non-statisticians) as the one measure of whether or not the phenomenon being studied is likely to be real, even though that is not what it is and is never what it was meant to be.

As an aside, this trend was likely driven by the need for simplicity. People want there to be one simple bottom line to a study, so they treat the p-value that way. It’s like evaluating the power of a computer system solely by its clock speed, one number, rather than considering all the components.

A recent paper by Pandolfi and Carreras nicely deconstructs the myth of the p-value and shows how p-value abuse is especially problematic within the world of CAM (thanks to Mark Crislip for bringing this paper to my attention) – “The faulty statistics of complementary alternative medicine (CAM)”.

For background, the p-value is the probability that the data in an experiment would demonstrate as much or more of a difference between the intervention and control, given the null hypothesis. In clinical studies we can rephrase this to: what is the probability that the treatment group would be as different from the control group as observed, or more so, assuming the treatment has no actual effect? Many people, however, misinterpret the p-value as answering a different question: what is the probability that the treatment works?
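To make that definition concrete, here is a minimal sketch of an exact permutation test. The function name and the outcome scores are hypothetical, invented purely for illustration. If the treatment does nothing, the group labels are arbitrary, so we can reshuffle them every possible way and ask how often the difference is at least as large as the one observed.

```python
from itertools import combinations

def permutation_p_value(treatment, control):
    """Two-sided exact permutation p-value for a difference in group means."""
    pooled = list(treatment) + list(control)
    n_treat, n_control = len(treatment), len(control)
    total = sum(pooled)
    observed = sum(treatment) / n_treat - sum(control) / n_control

    extreme = 0
    count = 0
    # Under the null hypothesis every relabelling of the subjects is equally likely.
    for idx in combinations(range(len(pooled)), n_treat):
        group_sum = sum(pooled[i] for i in idx)
        diff = group_sum / n_treat - (total - group_sum) / n_control
        # Small tolerance guards against floating point ties.
        if abs(diff) >= abs(observed) - 1e-12:
            extreme += 1
        count += 1
    return extreme / count

# Hypothetical outcome scores, made up for this example.
p = permutation_p_value([10, 11, 12], [1, 2, 3])
print(p)  # 2 of the 20 possible relabellings are this extreme: 0.1
```

Note what the number is and is not: it is the probability of data this extreme if the null hypothesis is true. Nothing in the calculation refers to how plausible the treatment was to begin with.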

Pandolfi and Carreras correctly point out that this is committing a formal logical fallacy, the fallacy of the transposed conditional. To illustrate this they give an excellent example. The probability of having red spots in a patient with measles is not the same as the probability of measles in someone who has red spots.

In other words, the p-value tells us the probability of the data given the null hypothesis, but what we really want to know is the probability of the hypothesis given the data. We can’t reverse the logic of p-values simply because we want to.

No worries – Bayes’ theorem comes to the rescue. This is precisely why we at SBM have largely advocated taking a Bayesian approach to scientific questions. A Bayesian approach is how people generally operate. We have prior beliefs about the world, and we update those beliefs as new information comes in (unless, of course, we have an emotional attachment to those prior beliefs, but that’s another article).

In science the logic of the Bayesian analysis is essentially: establish a prior probability for the hypothesis, then look at the new data and calculate how that new data affects the prior probability, giving a posterior probability.
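Written out as a sketch (the symbols $H$ for the hypothesis and $D$ for the data are my own notation here, not taken from the paper), Bayes’ theorem is:

$$
P(H \mid D) = \frac{P(D \mid H)\,P(H)}{P(D)}
$$

It is often easier to think about in its odds form:

$$
\frac{P(H \mid D)}{P(\neg H \mid D)} = \frac{P(D \mid H)}{P(D \mid \neg H)} \times \frac{P(H)}{P(\neg H)}
$$

That is, posterior odds equal the likelihood ratio (the Bayes factor) times the prior odds. The p-value speaks only to how the data behave under $\neg H$, the null hypothesis. It carries no information about the prior odds, which is why it cannot stand in for the left-hand side.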

Pandolfi and Carreras point out that, ironically, this is how doctors function in everyday clinical thinking. When we see a patient we determine the differential diagnosis, a list of possible diagnoses from most likely to least likely. When we order a diagnostic test for a specific diagnosis on the list, we first consider the pre-test probability of the diagnosis. This is based upon the prevalence of the disease and how closely the patient matches the demographics, signs, and symptoms of that disease. We then apply a diagnostic test that has a certain specificity and sensitivity, and based on the results we determine the posterior probability of the diagnosis.

Therefore, the pre-test probability is essential to determining how likely it is that a positive test result is a true positive rather than a false positive, or that a negative result is a true negative rather than a false negative. You can’t properly interpret the results of the test without knowing the pre-test probability.

Ironically, this logic is abandoned when evaluating scientific research. In fact, the main flaw in the way evidence-based medicine is applied is that it ignores the pre-test probability, and relies heavily on an indirect measure (the p-value) in isolation to interpret test results. If applied to clinical medicine, such a process would constitute gross malpractice.

To drive this point home a little further, using a p-value in isolation in a clinical study to determine if the phenomenon under study is real is like using a non-specific diagnostic test to determine that a patient has a very rare disease, ignoring predictive value and the possibility of a false positive test. As experienced clinicians understand, if a disease is truly rare, then even a reasonably specific test is far more likely to generate a false positive than a true positive.
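A quick calculation shows how stark this can be. This is a minimal sketch with made-up numbers (a disease affecting 1 in 1,000 people, and a test that is 95% sensitive and 95% specific); the function name is mine, chosen only for illustration.

```python
def posterior_probability(prior, sensitivity, specificity):
    """Probability of disease after a positive test, by Bayes' theorem."""
    true_pos = sensitivity * prior                    # diseased and test positive
    false_pos = (1.0 - specificity) * (1.0 - prior)   # healthy but test positive
    return true_pos / (true_pos + false_pos)

# Hypothetical figures, not drawn from any real test or disease.
ppv = posterior_probability(prior=0.001, sensitivity=0.95, specificity=0.95)
print(f"{ppv:.3f}")  # 0.019
```

With these assumed figures, a positive result means the patient has the disease less than 2% of the time; more than 98% of positives are false positives. The same test applied where the pre-test probability is 50% would be right 95% of the time.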

The analogy here is this – when studying a phenomenon that is unlikely, a significant p-value is far more likely to be a false positive than a true positive. This is why p-values are especially problematic when applied to CAM.

CAM modalities are alternative largely because they did not emerge from mainstream scientific thinking. In many cases, the claims made are incompatible with modern science. Homeopathy, for example, would require rewriting the physics, chemistry, physiology, and biology textbooks to a significant degree. Apparently violating basic laws of science at the very least renders a hypothesis equivalent to a rare disease – having a low prior probability. Therefore, even with an impressive looking p-value of 0.01, the probability could still be overwhelming that the phenomenon being tested is not real and the outcome is a false positive.
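The diagnostic-test logic carries over directly if we treat a study as the test. In this rough sketch, statistical power plays the role of sensitivity and the significance threshold plays the role of the false positive rate. The 80% power figure and the list of priors are my assumptions for illustration, and the calculation treats a study only as "significant or not" at the threshold, which is a simplification.

```python
def prob_real_given_significant(prior, alpha=0.01, power=0.8):
    """Chance the effect is real, given a result significant at level alpha."""
    true_positives = power * prior            # real effects that reach significance
    false_positives = alpha * (1.0 - prior)   # null effects that reach significance
    return true_positives / (true_positives + false_positives)

# Assumed priors: a coin flip, a long shot, and a homeopathy-like claim.
for prior in (0.5, 0.01, 0.001):
    result = prob_real_given_significant(prior)
    print(f"prior {prior:>6}: P(real | significant) = {result:.3f}")
```

Under these assumptions the three lines come out at roughly 0.988, 0.447, and 0.074. For a hypothesis with a prior of 1 in 1,000, a result significant at 0.01 is still about 93% likely to be a false positive, even granting the study generous power.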

Conclusion

The Pandolfi and Carreras paper nicely illustrates one of the core principles of science-based medicine – putting the science back into medicine. Evidence is not enough; we also have to put that evidence into the context of our basic scientific understanding of the world, expressed as a prior probability. It may not be possible to have a rigorous quantitative expression of that prior probability, but we can at least use representative figures.

For example, Pandolfi and Carreras use prior odds of 9:1 against to represent the skeptical position. This is being generous, in my opinion, as I would give odds of 999:1 against, at least, for claims such as homeopathy. But even with the highly conservative odds of 9:1, a p-value of 0.01 does not favor the phenomenon being real, but rather the null hypothesis.
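One way to sketch this kind of calculation is with the Sellke-Bayarri-Berger bound, which says that for p < 1/e the Bayes factor in favor of the null can be no smaller than −e·p·ln(p). I am assuming this calibration for illustration; it may not be the exact method used in the paper, and the function names are mine. Because the bound is the most favorable case for the alternative, the posterior probabilities below are lower bounds for the null.

```python
import math

def min_bayes_factor(p):
    """Sellke-Bayarri-Berger lower bound on the Bayes factor for the null."""
    if not 0.0 < p < 1.0 / math.e:
        raise ValueError("bound only applies for 0 < p < 1/e")
    return -math.e * p * math.log(p)

def posterior_null_probability(prior_odds_null, p):
    """Lower bound on P(null | data) given prior odds in favor of the null."""
    posterior_odds = prior_odds_null * min_bayes_factor(p)
    return posterior_odds / (1.0 + posterior_odds)

# Prior odds against the hypothesis: 9:1 (skeptical) and 999:1 (very skeptical).
for odds in (9, 999):
    prob = posterior_null_probability(odds, p=0.01)
    print(f"{odds}:1 against, p = 0.01 -> P(null) >= {prob:.2f}")
```

Under this assumed bound, odds of 9:1 against leave the null hypothesis at least 53% likely after a p-value of 0.01, and odds of 999:1 leave it at least 99% likely.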

The two take-home messages here are these: First – don’t rely on p-values as the sole measure of a study’s outcome. Favor, rather, a Bayesian analysis. Even in the absence of a formal Bayesian analysis, an informal Bayesian approach will help put the study results into context.

Second – we should probably raise the bar for statistical significance. A p-value of 0.05 is not as impressive as most people might think. Mark suggested we set the bar at 0.001 as a first approximation, and then we go down from there based upon prior probability.

Doing this will work massively against the interests of CAM, because CAM claims tend to have a low prior probability. But this is in the interests of good medicine.