Category: Clinical Trials

Cochrane Reviews: The Food Babe of Medicine?

There are two topics about which I know a fair amount. The first is Infectious Disease. I am an expert in ID, Board Certified and certified bored, by the ABIM. The other, although to a lesser extent, is SCAMs. When I read the literature on these topics, I do so with extensive knowledge and, in the case of ID, 30 years of clinical...

The Compassionate Freedom of Choice Act: Ill-advised “right to try” goes federal

Not too long ago, I expressed alarm at a series of bills that were popping up like so much kudzu in various state legislatures, namely “right to try” bills. Both Jann Bellamy and I warned that these bills gave false hope to patients with terminal illnesses. Basically, these laws claim to grant the “right” of patients with terminal illnesses...

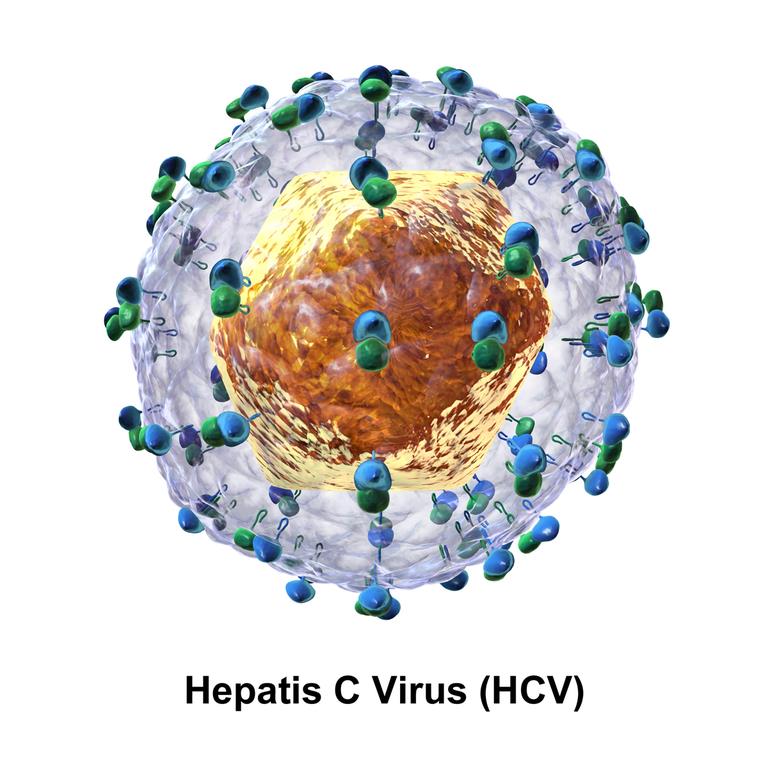

Curing Hepatitis C: A Success Story and a Price Tag

Previously incurable, hepatitis C now has a treatment. A very, very expensive treatment. But there is hope for acutely and chronically infected people.

Gluten-free skin and beauty products: Extracting cash from the gullible

Gluten-free doesn't make sense for a lot of diets. Except for lip balm and toothpaste, it makes an order of magnitude less sense for beauty products.

New evidence, same conclusion: Tamiflu only modestly useful for influenza

Does Tamiflu have any meaningful effects on the prevention or treatment of influenza? Considering the drug’s been on the market for almost 15 years, and is widely used, you would expect this question to have been answered after 15 flu seasons. Answering this question from a science-based perspective requires three steps: Consider prior probability, be systematic in the approach, and get all the...

“Right to try” laws and Dallas Buyers’ Club: Great movie, terrible for patients and terrible policy

One of my favorite shows right now is True Detective, an HBO show in which two cops pursue a serial killer over the course of 17 years. Starring Woody Harrelson and Matthew McConaughey, it’s an amazingly creepy show, and McConaughey is superb at playing his character, Rustin Cohle. I’m sad that the show will be ending tomorrow, but I really do...

Acupuncture Vignettes

I seem to be writing a lot about acupuncture of late. As perhaps the most popular pseudo-medicine, there seems to be more published on the topic. I have a lot of internet searches set up to automatically feed me new information on various SCAMs. Interestingly, all the chiropractic updates seem to be published on chiropractic economics sites, not from scientific sources. Go...

The illusions of “right to try” laws

[Ed. Note: For additional commentary on why “right-to-try” laws are such a bad idea, see “Right to try” laws and Dallas Buyers’ Club: Great movie, terrible for patients and terrible policy and The false hope of “right-to-try” metastasizes to Michigan.] There is nothing like a touching anecdote to spur a politician into action. And those who want to try investigational drugs outside...

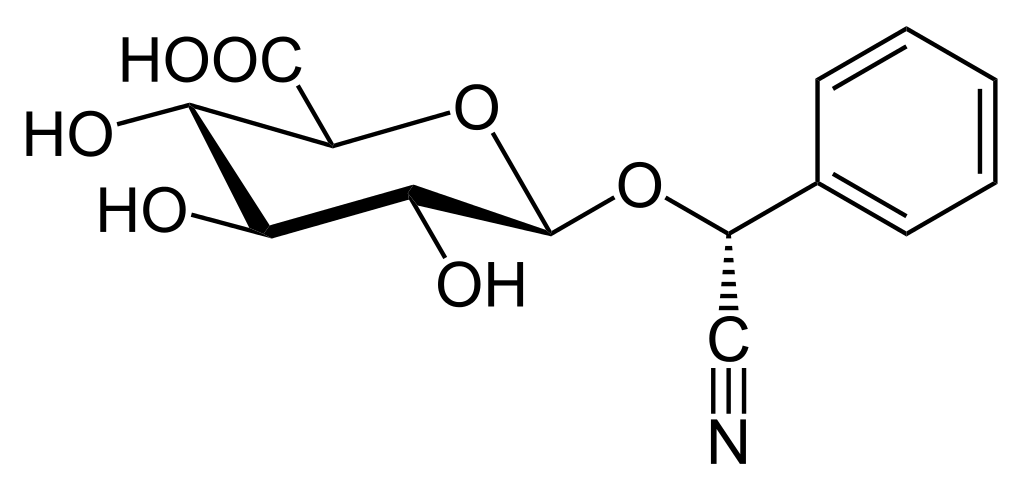

Eric Merola and Ralph Moss try to exhume the rotting corpse of Laetrile in a new movie

Note: Some of you have probably seen a different version of this post fairly recently. I have a grant deadline this week and just didn’t have time to come up with fresh material up to the standards of SBM. This left me with two choices: Post a “rerun” of an old post, or recycle something. I decided to recycle something for reasons...