Category: Diagnostic tests & procedures

Is Midstream Urine Collection Necessary?

There's considerable evidence that the standard procedure for collecting urine specimens, with cleansing and midstream collection, may not be necessary. Is it time to change?

There is no COVID-19 “casedemic.” The pandemic is real and deadly.

Antivaccine activists and pandemic minimizers Del Bigtree and Joe Mercola are promoting the myth of the "casedemic," which claims that the massive increase in reported COVID-19 cases is an artifact of increased PCR testing and of false positives caused by an overly sensitive test threshold. As they have done for vaccines and vaccine-preventable diseases many times before, they are vastly...

Trump administration announces some COVID-19 tests can skip FDA review, providing new opportunities for dubious lab tests

The Trump administration unexpectedly announced that the FDA will no longer regulate some lab tests, including those for COVID-19. In addition to potentially allowing unreliable COVID tests onto the market, the decision creates an opening for more bogus CAM tests.

Is Magnesium the Underlying Cause and Treatment for Everything?

Carolyn Dean believes magnesium deficiency is the cause of a great many diseases and recommends that everyone take magnesium supplements, preferably the one she sells, ReMag. I remain skeptical.

Don’t Believe What You Think

A new book by Edzard Ernst provides a concise course in critical thinking as well as a wealth of good science-based information to counter the widespread misinformation about SCAM.

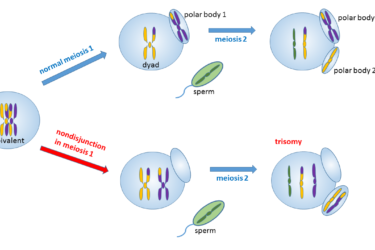

Prenatal Screening Tests for Chromosome Abnormalities

Science has made remarkable advances in prenatal screening, but false positive and false negative results can occur, and there are ethical concerns.

The Wisdom of Third Molar Removal

In the Olden Times (ten or so years ago), the indication for third molar (aka “wisdom teeth”) removal was the presence of wisdom teeth. Now, oral surgeons are rethinking things.

“Personalized” dietary recommendations based on DNA testing: Modern astrology

GenoPalate is a company that claims to give "personalized" dietary recommendations based on DNA testing. Unfortunately, what is provided by such companies is more akin to astrology than science.

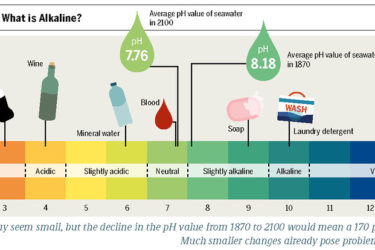

Skin pH: Salesmanship, Not Science

People are being encouraged to worry about the pH of their skin and to try to change it. These concerns and interventions are not supported by scientific evidence.