A new website, CureCrowd, came to my attention that promises to help users “Discover what truly works.” The idea is essentially to crowdsource anecdotal reports about which treatments work for specific conditions.

This is an interesting idea, one that could harness the flow of information over the internet to do what is essentially an observational, uncontrolled, and unscientific study of treatment effects. At its best, this type of information would amount to what we call a pragmatic study – an open-label study of the real-world application of specific treatments.

Pragmatic studies have their place – they add information about the practical applicability of various treatments. Pragmatic studies, however, are not efficacy trials – they cannot and should not be used to make efficacy claims. It has recently come into vogue for proponents of various CAM (complementary and alternative medicine) treatments to rely on pragmatic studies to make efficacy claims when actual efficacy trials have failed to show effectiveness.

Pragmatic studies, in other words, should be conducted only with therapies that have already demonstrated efficacy.

The information that would be generated by CureCrowd, however, is more problematic than a well-conducted pragmatic study. A good pragmatic study should, at least, be systematic – looking at consecutive patients in a practice, for example. Online surveys, however, are self-selected: only people who choose to respond are counted. This introduces selection bias, which may overwhelm any real signal in the results. The information may therefore tell us what people feel motivated to report, not what actually happened.
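To make the selection-bias point concrete, here is a minimal simulation sketch. Every number in it is an assumption chosen for illustration, not data from CureCrowd or any study: a hypothetical treatment after which 30% of users improve (for whatever reason), and a hypothetical tendency for people who improved to be four times as likely to file a report as people who did not.

```python
import random


def simulate_self_selected_survey(
    n_users=100_000,
    true_improvement_rate=0.30,   # assumed: fraction of all users who improve
    p_report_if_improved=0.20,    # assumed: improved users often report
    p_report_if_not=0.05,         # assumed: others rarely bother
    seed=0,
):
    """Return (true rate among all users, rate among those who reported)."""
    rng = random.Random(seed)
    improved_total = 0
    reports = 0
    improved_reports = 0
    for _ in range(n_users):
        improved = rng.random() < true_improvement_rate
        improved_total += int(improved)
        # Whether someone reports depends on their outcome: self-selection.
        p_report = p_report_if_improved if improved else p_report_if_not
        if rng.random() < p_report:
            reports += 1
            improved_reports += int(improved)
    return improved_total / n_users, improved_reports / reports


true_rate, reported_rate = simulate_self_selected_survey()
print(f"improvement rate among all users:      {true_rate:.2f}")
print(f"improvement rate among self-reporters: {reported_rate:.2f}")
```

Under these assumed probabilities the expected rate among reporters is 0.06 / (0.06 + 0.035), or roughly 63%, about double the true 30%. Nothing about the treatment changed; only who chose to speak up. Setting the two reporting probabilities equal removes the gap, which is what a systematic sample of consecutive patients is meant to approximate.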

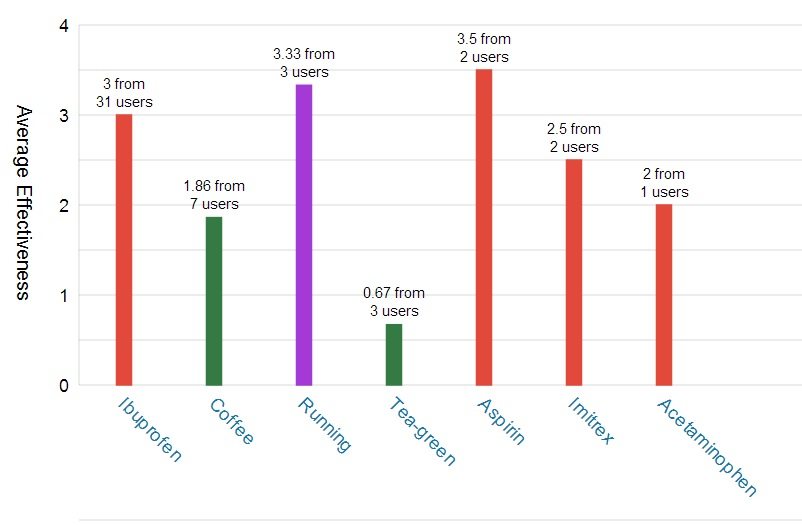

It does, however, give the illusion of useful information. Take a look at the graph above – results so far for headache (the site is just getting started and so the information is still scant). I treat headaches, so I can put this information into context. You can see that caffeine is reported as reasonably effective. However, it’s possible that most people who believe caffeine treats their headaches are just treating headaches caused by caffeine withdrawal. For most people with recurrent headaches it is best to avoid caffeine completely, but this chart may make them think caffeine is an effective treatment, leading to more consumption.

I should also point out that “headache” is a problematic category. There are many headache types, such as migraine and tension headache, and they respond to different treatments. This information is not useful without knowing what kind of headache the different users were treating. This brings up the broader problem with using an online survey for medical information – who verifies the diagnosis being treated?

Conclusion

I do think that using the internet to generate large amounts of possibly useful medical data is an interesting idea with some potential. But there are many pitfalls. Just gathering large amounts of data will not necessarily tell us anything useful; it can give a false sense of useful information even when the results are grossly misleading.

Treatment effects are particularly problematic, as they are susceptible to placebo effects and a host of perception and reporting biases.

Scientists use uncontrolled observational studies all the time, but they have to be put into their proper context. They are useful for generating hypotheses, not so much for definitively testing them. They are also useful as follow-up data to see how proven-effective treatments work in real-world settings.

An online survey might generate some interesting data, but I don’t think it can be relied upon to “figure out what really works.” The fatal flaw in this approach is the self-selected nature of the information.

My major concern about this type of project is that it is designed to give information back to users, with the promise that this information will be useful. It would be more legitimate to use such a website, at least initially, as an experiment itself. First let’s validate this type of information. The online surveys could be done and the information generated could be compared to controlled efficacy data. If the results track well with controlled data (where such data are available), that would at least tell us something.
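One way such a validation could be sketched is to rank treatments by their crowdsourced score and by their effect size in controlled trials, then ask how well the two rankings agree. The snippet below uses a hand-rolled Spearman rank correlation for this; the choice of statistic is my assumption, not something the site proposes, and the treatment names and numbers are invented placeholders, not real survey or trial results.

```python
from statistics import mean


def ranks(values):
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = avg_rank
        i = j + 1
    return result


def pearson(x, y):
    """Pearson correlation; assumes neither input is constant."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


# Placeholder values, invented purely for illustration.
crowd_scores = {"treatment_a": 0.80, "treatment_b": 0.65,
                "treatment_c": 0.40, "treatment_d": 0.30}
trial_effects = {"treatment_a": 0.10, "treatment_b": 0.45,
                 "treatment_c": 0.35, "treatment_d": 0.05}

# Compare only treatments for which controlled data exist.
shared = sorted(crowd_scores.keys() & trial_effects.keys())
rho = spearman([crowd_scores[t] for t in shared],
               [trial_effects[t] for t in shared])
print(f"Spearman rho over {len(shared)} treatments: {rho:.2f}")
```

A correlation near 1 would suggest the crowd ranks treatments much as the trials do; a value near 0 or below would suggest the survey is measuring something else, such as motivation to report. With only a handful of treatments any such number is very noisy, so a real validation would need many conditions and treatments, and would also have to confirm that the diagnoses being compared actually match.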

A great deal more thought needs to go into this type of crowdsourcing, such as how to capture more accurate and detailed diagnostic information. “Headache” is ultimately a useless category. Users should at least be given the option of filling out a standard headache questionnaire, and that information can be analyzed separately.

Using online methods for capturing health information is a potentially useful new tool for medical scientists, but a great deal of thought and analysis is needed to develop such tools before they will actually be useful. Treatment information is particularly difficult. Giving such raw data back to users, at this stage, may be more harmful than beneficial.