Red meat causes cancer. No, processed meat causes cancer. OK, it’s both red meat and processed meat. Wait, genetically modified grain causes cancer (well, not really). No, aspartame causes cancer. No, this food coloring or that one causes cancer.

Clearly, everything you eat causes cancer!

That means you can avoid cancer by avoiding processed meats, red meat, GMO-associated food (no, probably not), aspartame, food colorings, or anything “unnatural.” Or so it would seem from reading the popular literature and sometimes even the scientific literature. As I like to say to my medical students, life is a sexually transmitted fatal disease that gets us all eventually, but most of us would like to delay the inevitable as long as possible and remain as healthy as possible for as long as possible. One of the most obvious ways to accomplish these twin aims is through diet. While the parameters of what constitutes a reasonably healthy diet have been known for decades, diet still ranks high on the list of concerns regarding actions we take on a daily basis that can increase our risk of various diseases. Since cancer is the disease (or, I should say, cancers are the diseases) that many, if not most, people consider to be the scariest, naturally we worry about whether certain foods or food ingredients increase our risk of cancer.

Thus was born the field of nutritional epidemiology, a prolific field with thousands of publications annually. Seemingly, each and every one of these thousands of publications gets a news story associated with it, because the media love a good “food X causes cancer” or “food Y causes heart disease” story, particularly before the holidays. As a consequence, consumers are bombarded with what I like to call the latest health risk of the week, in which, in turn, various foods, food ingredients, or environmental “toxins” are blamed and exonerated for a panoply of health problems, ranging from the minor to the big three, cardiovascular disease, diabetes, and cancer. It’s no wonder that consumers are confused, reacting either with serial alarm at each new “revelatory” study or with a shrug of the shoulders as each new alarm joins other alarms to produce a tinnitus-like background drone. Unfortunately, this cacophony of alarm also provides lots of ammunition to quacks, cranks, and crackpots to tout their many and varied diets that, they promise, will cut your risk of diseases like cancer and heart disease to near zero—but only if adhered to with monk-like determination and self-denial. (Yes, I’m talking about you, Dean Ornish, among others.)

All of this is why I really wanted to write about an article I saw popping up in the queue of articles published online ahead of print about a month ago. Somehow, other topics intervened, as did my vacation and then the holidays, and somehow I missed it last week, even though a link to the study sits in my folder named “Blog fodder.” Fortunately, it just saw print this week in its final version, giving me an excuse to make up for my oversight. It’s a study by one of our heroes (despite his occasional misstep) here on the SBM blog, John Ioannidis. It comes in the form of a study by Jonathan D. Schoenfeld and John Ioannidis in the American Journal of Clinical Nutrition entitled, brilliantly, Is everything we eat associated with cancer? A systematic cookbook review.

Now, when John Ioannidis says a “systematic cookbook review,” he really means “a systematic cookbook review.” Basically, he and Schoenfeld went through The Boston Cooking-School Cook Book and checked whether the ingredients used in its recipes had been tested for an association with cancer and, if so, what was found. What I love about John Ioannidis is embodied in the methodology of this study:

We selected ingredients from random recipes included in The Boston Cooking-School Cook Book (28), available online at http://archive.org/details/bostoncookingsch00farmrich. A copy of the book was obtained in portable document format and viewed by using Skim version 1.3.17 (http://skim-app.sourceforge.net). The recipes (see Supplementary Table 1 under “Supplemental data” in the online issue) were selected at random by generating random numbers corresponding to cookbook page numbers using Microsoft Excel (Microsoft Corporation). The first recipe on each page selected was used; the page was passed over if there was no recipe. All unique ingredients within selected recipes were chosen for analysis. This process was repeated until 50 unique ingredients were selected.

We performed literature searches using PubMed (http://www.ncbi.nlm.nih.gov/pubmed/) for studies investigating the relation of the selected ingredients to cancer risk using the following search terms: “risk factors”[MeSH Terms] AND “cancer”[sb] AND the singular and/or plural forms of the selected ingredient restricted to the title or abstract. Titles and abstracts of retrieved articles were then reviewed to select the 10 most recently published cohort or case-control studies investigating the relation between the ingredients and cancer risk. Ingredient derivatives and components (eg, orange juice) and ingredients analyzed as part of a broader diet specifically mentioned as a component of that diet were considered. Whenever <10 studies were retrieved for a given article, an attempt was made to obtain additional studies by searching for ingredient synonyms (eg, mutton for lamb, thymol for thyme), using articles explicitly referred to by the previously retrieved material, and broadening the original searches (searching simply by ingredient name AND “cancer”).

How can you not love this guy?
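For the programmers in the audience, the procedure quoted above is simple enough to sketch in a few lines of Python. To be clear, this is my own illustration and not the authors' code (they used Excel and a PDF viewer). The names `recipes_by_page`, `sample_ingredients`, and `build_pubmed_query` are hypothetical, the toy cookbook is invented, and the use of PubMed's `[tiab]` (Title/Abstract) tag is an assumption about how the "restricted to the title or abstract" step might be written.

```python
import random


def sample_ingredients(recipes_by_page, last_page, target=50, seed=None):
    """Pick random pages until `target` unique ingredients are collected.

    recipes_by_page is a hypothetical mapping of page number to the list of
    recipes on that page; each recipe is a list of ingredient names.
    """
    rng = random.Random(seed)
    pages = list(range(1, last_page + 1))
    rng.shuffle(pages)  # assumption: no page is drawn twice
    selected = []
    for page in pages:
        recipes = recipes_by_page.get(page)
        if not recipes:
            continue  # the page is passed over if it has no recipe
        for ingredient in recipes[0]:  # only the first recipe on the page
            if ingredient not in selected:
                selected.append(ingredient)
        if len(selected) >= target:
            break
    return selected[:target]  # assumption: stop at exactly `target`


def build_pubmed_query(singular, plural=None):
    """Assemble the search string for one ingredient.

    [tiab] is PubMed's Title/Abstract field tag; how the authors actually
    typed the restriction is not stated, so this form is a guess.
    """
    forms = [singular] + ([plural] if plural else [])
    ingredient = " OR ".join(f'"{form}"[tiab]' for form in forms)
    return f'"risk factors"[MeSH Terms] AND "cancer"[sb] AND ({ingredient})'


if __name__ == "__main__":
    # Invented three-recipe "cookbook" purely for demonstration.
    toy_cookbook = {1: [["egg", "flour"], ["rum"]], 3: [["egg", "butter", "salt"]]}
    print(sample_ingredients(toy_cookbook, last_page=4, target=3, seed=1))
    print(build_pubmed_query("egg", "eggs"))
```

The point of the sketch is how little judgment is involved: pages are chosen by a random number generator and the search string is built mechanically, which is what makes the resulting sample of the literature hard to cherry-pick.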

His results were also not that surprising. First, he noted that for 80% of the ingredients his methodology identified there was at least one study examining its cancer risk. That’s forty ingredients, which he helpfully lists: veal, salt, pepper spice, flour, egg, bread, pork, butter, tomato, lemon, duck, onion, celery, carrot, parsley, mace, sherry, olive, mushroom, tripe, milk, cheese, coffee, bacon, sugar, lobster, potato, beef, lamb, mustard, nuts, wine, peas, corn, cinnamon, cayenne, orange, tea, rum, and raisin. He also notes that these ingredients represent many of the most common sources of vitamins and nutrients in a typical US diet. In contrast, the ten ingredients for which no study was identified tended to be less common: bay leaf, cloves, thyme, vanilla, hickory, molasses, almonds, baking soda, ginger, and terrapin. One wonders how almonds aren’t considered under nuts, but that’s just me being pedantic.

One also wonders whether “Dr.” Robert O. Young, alkalinization quack extraordinaire, who claims that cancer is due to too much acid and that alkalinization is the answer, has heard about no studies for baking soda, which to him, along with a vegan-based diet that is allegedly “alkalinizing,” is part of the “alkaline cure” for everything from cancer to sepsis to heart disease. Now, if you type “baking soda cancer” into the PubMed search box, you’ll actually pull up over 350 articles. However, none of them appear to be epidemiological studies. This reminds me. I need to look into that literature and do a post about it again. But I digress.

Let’s get back to the meat of the study itself. (Sorry, I couldn’t resist.)

Having identified the studies and meta-analyses, Schoenfeld and Ioannidis next extracted the data from the studies, looked at the quality of the studies, the size and significance of the effects reported, how specific they were, and whether there were indications of bias in any of them. The results they found were all over the map, with about the same number of studies finding increased compared to decreased risks of malignancy, and, as before, Schoenfeld and Ioannidis provide a helpful list:

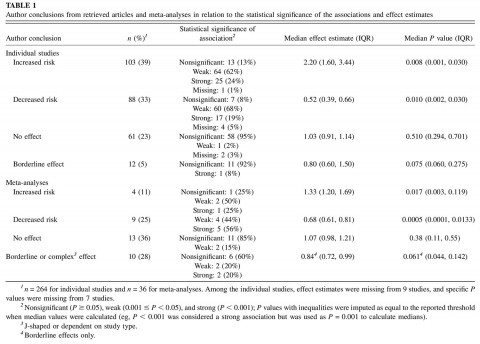

Author conclusions reported in the abstract and manuscript text and relevant effect estimates are summarized in Table 1. Thirty-nine percent of studies concluded that the studied ingredient conferred an increased risk of malignancy; 33% concluded that there was a decreased risk, 5% concluded that there was a borderline statistically significant effect, and 23% concluded that there was no evidence of a clearly increased or decreased risk. Thirty-six of the 40 ingredients for which at least one study was identified had at least one study concluding increased or decreased risk of malignancy: veal, salt, pepper spice, egg, bread, pork, butter, tomato, lemon, duck, onion, celery, carrot, parsley, mace, olive, mushroom, tripe, milk, cheese, coffee, bacon, sugar, lobster, potato, beef, lamb, mustard, nuts, wine, peas, corn, cayenne, orange, tea, and rum.

The statistical support of the effects was weak (0.001 ≤ P < 0.05) or even nonnominally significant (P > 0.05) in 80% of the studies. It was also weak or nonnominally significant, even in 75% of the studies that claimed an increased risk and in 76% of the studies that claimed a decreased risk (Table 1).
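To make those bins concrete, here is a minimal sketch of how a list of reported P values could be sorted into the categories the authors describe: weak support for P from 0.001 up to (but not including) 0.05, and not nominally significant above 0.05. The label "strong" for P below 0.001, the handling of a P of exactly 0.05, and the function names are my assumptions; the P values in the example are invented and are not data from the paper.

```python
def support_category(p):
    """Classify one P value using the thresholds quoted from the paper."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("a P value must lie between 0 and 1")
    if p < 0.001:
        return "strong"  # assumption: my label for anything below 0.001
    if p < 0.05:
        return "weak"    # 0.001 <= P < 0.05
    # assumption: P of exactly 0.05 is treated as not nominally significant
    return "not nominally significant"


def share_weak_or_null(p_values):
    """Fraction of P values that are weak or not nominally significant."""
    flagged = sum(support_category(p) != "strong" for p in p_values)
    return flagged / len(p_values)


if __name__ == "__main__":
    invented_p_values = [0.0001, 0.01, 0.04, 0.3, 0.6]  # made up for illustration
    print(share_weak_or_null(invented_p_values))
```

Run over the P values extracted from the real studies, a tally like this is all it takes to arrive at a figure such as the 80% quoted above.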

To boil it all down, what we have here are a bunch of studies that report an association between a food or food ingredient and either increased or decreased risk of various cancers, but the vast majority of them have very weak effect sizes and/or nominal statistical significance. Next, Schoenfeld and Ioannidis looked at ingredients for which meta-analyses existed and found 36 relevant effect size estimates based on meta-analyses. Many of these meta-analyses combined studies using different exposure contrasts, such as highest quartile/lowest quartile versus another measure, such as the highest versus lowest consumption. Only 13 meta-analyses were done by combining data on the same exact contrast across all studies. As one would expect when one pools diverse studies, meta-analyses tended to find smaller effects than individual studies, as shown in the following table from the paper (click to embiggen):

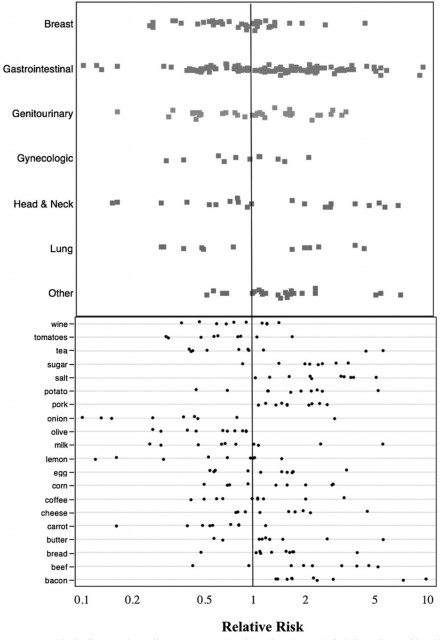
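Why would pooling shrink the effect? A rough way to see it is the textbook fixed-effect (inverse-variance) method, sketched below. I am not claiming that the meta-analyses Schoenfeld and Ioannidis examined all used this method; it is simply the most basic way to combine relative risks. The function name and the numbers in the example are invented for illustration.

```python
import math


def pool_fixed_effect(estimates):
    """Inverse-variance pooling of relative risks on the log scale.

    estimates: list of (relative_risk, ci_lower, ci_upper) tuples, where the
    interval is assumed to be a 95% confidence interval.
    Returns (pooled_rr, pooled_lower, pooled_upper).
    """
    weighted_sum = 0.0
    total_weight = 0.0
    for rr, lower, upper in estimates:
        # Recover the standard error of log(RR) from the width of the 95% CI.
        se = (math.log(upper) - math.log(lower)) / (2 * 1.96)
        weight = 1.0 / se ** 2
        weighted_sum += weight * math.log(rr)
        total_weight += weight
    pooled_log = weighted_sum / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    return (
        math.exp(pooled_log),
        math.exp(pooled_log - 1.96 * pooled_se),
        math.exp(pooled_log + 1.96 * pooled_se),
    )


if __name__ == "__main__":
    # Invented example: one study says risk doubles, another says it halves.
    print(pool_fixed_effect([(2.0, 1.0, 4.0), (0.5, 0.25, 1.0)]))
```

When individual studies scatter on both sides of a relative risk of 1, as they do for most of these ingredients, the weighted average lands much closer to 1 than the eye-catching individual results do.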

As I said, these findings are all over the map. Now, if you really want to see how chaotic the literature examined by Schoenfeld and Ioannidis is, take a look at the effect sizes found for ingredients for which there have been more than ten studies:

As you can see, it’s quite complex and all over the map, although there are a few ingredients for which the studies do appear to be fairly consistent, such as bacon being associated consistently with a higher risk of cancer. Bummer. Or is it? Let’s continue with Schoenfeld and Ioannidis’ analysis. What they found is rather disturbing. First, they report that although 80% of the studies they examined reported positive findings (i.e., a positive association between the food or ingredient studied and some form of cancer):

…the vast majority of these claims were based on weak statistical evidence. Many statistically insignificant “negative” and weak results were relegated to the full text rather than to the study abstract. Individual studies reported larger effect sizes than did the meta-analyses. There was no standardized, consistent selection of exposure contrasts for the reported risks. A minority of associations had more than weak support in meta-analyses, and summary effects in meta-analyses were consistent with a null average and relatively limited variance.

In other words, there are lots of studies out there that claim to find a link, either for increased risk or a protective effect, between this food or that ingredient and cancer, but very few of them actually provide convincing support for their hypothesis. Worse, there appear to be a lot of manuscript-writing shenanigans going on, with the abstract (which usually means, I note, the press release) touting a strong association while the true weakness of the association is buried in the fine print in the results or discussion sections of the paper. Given that most scientists tend not to read each and every word of a paper unless they’re very interested in it or it’s highly relevant to their research, this deceptive practice can leave a false impression that the reported association is stronger than it really is. Yes, I realize that we as scientists should probably be more careful, but a combination of time pressures and the enormous volume of literature out there make it difficult to do so. Moreover, as Schoenfeld and Ioannidis note in their discussion, although they didn’t cover the entire nutritional epidemiology literature for all these ingredients (which would be virtually impossible), their search strategy was “representative of the studies that might be encountered by a researcher, physician, patient, or consumer embarking on a review of this literature.” In other words, they tried to search the literature in a way that would bring up the most commonly cited recent studies.

The effect is likely to be even worse for the lay public, who get information filtered through press releases that tend to amplify the same problem (lack of proper caveats and discussion of how nominal or marginal the association actually is when examined critically). After all, university press offices are not generally known for their nuance, and reporters tend to want to cover stories they deem interesting. Marginal studies are less interesting than a study with a strong, robust result. So studies all too often tend to be presented as though they have a strong, robust result, even when they don’t. This is facilitated by another problem observed by Ioannidis, namely the wide variety and inconsistency in definitions and exposure contrasts. This, argue Schoenfeld and Ioannidis, makes it easier for reporting biases to creep into the literature.

There’s also the issue of reporting bias, which could easily be more acute in nutritional epidemiology. The reason is that there is, understandably, a huge public interest in what sorts of foods might either be protective against or cause cancer. In an accompanying editorial, Michelle M. Bohan Brown, Andrew W Brown, and David B Allison, while noting that Schoenfeld and Ioannidis’ work does indeed suggest that everything we eat is associated with cancer in some way, also note that their study reports evidence of publication bias:

They [Schoenfeld and Ioannidis] found that almost three-fourths of the articles they reviewed concluded that there was an increased or decreased risk of cancer attributed to various foods, with most evidence being at least nominally significant. It appears, then, that according to the published literature almost everything we eat is, in fact, associated with cancer. However, Schoenfeld and Ioannidis proceeded to show that biases exist in the nutrient-cancer literature. The fidelity of research findings between nutrients and cancer may have been compromised in several ways. They identified an overstating of weak results (most associations were only weakly supported), a lack of consistent comparisons (inconsistent definitions of exposure and outcomes), and possible suppression of null findings (a bimodal distribution of outcomes, with a noticeable lack of null findings).

Brown et al note that the sources of these sorts of biases have been well known for a long time and can lead to self-deception. The types of bias most responsible for positive findings appear to include what is known as “white hat bias” (“bias leading to distortion of research-based information in the service of what may be perceived as ‘righteous ends’”), confirmation bias (in which overstated results match preconceived views, so that the authors overstating the results don’t adequately consider the weaknesses of their work), and, of course, publication bias. These biased results are then disseminated to the public through the lay media. As Brown et al note:

The implications of Schoenfeld and Ioannidis’ analysis may be important for nutritional epidemiology even more broadly. Numerous food ingredients are thought to have medicinal properties that are not sufficiently supported by current knowledge—for example, coffee “curing” diabetes (11). These distortions can also be used to demonize foods, as shown by the longstanding presumption that dietary cholesterol in eggs contributes to heart disease (12). Causative relations between various foods and diseases likely do exist, but the evidence for many relations is weak, although conclusions about these relations are stated with the certainty one would expect only from the most strongly supported evidence.

Indeed. Another implication, not noted by either Schoenfeld and Ioannidis or Brown et al, is that weak research findings suggesting a link between this food or that food and cancer lead not only to the inappropriate demonization of some foods but also to quackery. Anyone who has been a regular reader of this blog or one of the other blogs of the contributors to SBM, or who has followed the “alternative medicine” online underground, will immediately note that quacks frequently tout various “superfoods” or supplements chock full of various food ingredients believed to have protective effects against various diseases as the cure for what ails you, be it cancer, heart disease, diabetes, or whatever. Publishing studies with weak associations tarted up to look stronger than they really are only provides fuel for these quacks. Indeed, there is one site run by a Sayer Ji that devotes much of its news section to publishing stories and abstracts describing such studies. I’m referring to GreenMedInfo, which routinely publishes posts with titles like Avocado: The Fat So Good It Makes Hamburger Less Bad; Black Seed – ‘The Remedy For Everything But Death’; and 5 Food-Medicines That Could Quite Possibly Save Your Life. And that’s just one example. Mike Adams, for instance, does the same sort of thing, just yesterday posting articles claiming that various foods can “lower blood pressure fast” and that the “Paleo diet” combined with vitamin D and avoiding food additives can reverse multiple sclerosis. Not surprisingly, the same sorts of hyperbole can regularly be found on The Huffington Post, and touted by supplement sellers. Even mainstream media outlets like MSNBC get into the act.

Of course, in all fairness, in science, most studies should just be published and let the chips fall where they may. It’s unfair to blame the authors of nutritional epidemiology studies for how their studies are used and abused. It is not unfair to blame them if they use poor methodology or overstate the strength and significance of their findings in the abstract and hide their weakness by burying it deep in the verbiage of the results or discussion sections. If Schoenfeld and Ioannidis are correct, this happens all too often. Obviously, their sample is a fairly small slice of the nutritional epidemiological literature and as such might not be representative, but given the methods used to choose the studies examined I doubt that if Schoenfeld and Ioannidis had gone up to 100 foods they would have found anything much different.

Unfortunately, in almost the mirror image to the nutrition alarmists, one also sees a disturbing temptation on the part of those who represent themselves as scientific to go too far in the other direction and point to Schoenfeld and Ioannidis’ study as a convenient excuse to summarily dismiss nutritional epidemiological findings linking various foods or types of foods with cancer or other disease. For example, the American Council on Science and Health (ACSH) did just that in response to this study, gloating that it proves that the food health scares it dismisses are insignificant. Well, actually, many scares are insignificant, but surely not all of them are. The ACSH also almost goes into conspiracy theories when it further claims that the mainstream media, except for a couple of UK newspapers such as The Guardian, ignored the study. Although arguably this particular study didn’t get huge coverage, it’s going a bit far to claim that the mainstream media ignored it. It didn’t; the study was featured on Boing-Boing, The Washington Post, Reason, Cancer Research UK, and others. Of course, one can’t help but note that the ACSH is the same organization that has referred to advocating organic foods as “elitist,” likes to disparagingly refer to questions about links between environment and cancer as “chemophobia,” and has been very quick to dismiss the possibility that various chemicals might be linked to cancer, as so famously parodied on The Daily Show a few years ago. We must resist the temptation to go too far in the opposite direction and reflexively dismiss even the possibility of such risks as the ACSH is wont to do, most famously with pesticides and other chemicals.

Indeed, as was pointed out at Cancer Research UK, the real issue is that individual studies taken in isolation can be profoundly misleading. There’s so much noise and so many confounders to account for that any single study can easily miss the mark, either overestimating or underestimating associations. Given publication bias and the tendency to believe that some foods or environmental factors must cause cancer, it’s not too surprising that studies tend to overestimate effect sizes more often than they underestimate them. Looking through all the noise and trying to find the true signals, there are at least a few foods that are reliably linked to cancer. For instance, alcohol consumption is positively linked with several cancers, including pancreatic, esophageal, and head and neck cancers, among others. There’s evidence that eating lots of fruit and vegetables compared to meat can have protective effects against colorectal cancer and others, although the links are not strong, and processed meats like bacon have been linked to various cancers, although, again, the elevated risk is not huge. When you boil it all down, it’s probably far less important what individual foods one eats than that one eats a varied diet that is relatively low in red meat and high in vegetables and fruits and that one is not obese.

So what can be done? It would be a good thing indeed if the sorts of observations that Ioannidis has made led to reform. Between Schoenfeld and Ioannidis’ study and the accompanying editorial, several remedies have been proposed that are not unlike steps that have been imposed on clinical trials of therapies and drugs: increased use and improvement of clinical trial and observational study registries; making raw data publicly available; making supporting documentation such as protocols, consent forms, and analytic plans publicly available; and mandating the publication of results from human (or animal) research supported by taxpayer funds. I must admit that the last of these is already a requirement, as is registration of all taxpayer-supported clinical trials at ClinicalTrials.gov, but the other proposals could also help.

Finally, I’ll finish with a quote from the editorial by Brown et al:

As Schoenfeld and Ioannidis (6) highlighted, comprehensive approaches to improve reporting of nutrient-disease outcomes could go a long way toward decreasing repeated sensational reports of the effects of foods on health. However, none of these debiasing solutions address the fundamental human need to perceive control over feared events. Although scientists may have ulterior motives for looking for nutrient-disease associations, the public is always the final audience. It is therefore imperative that we spend less time repeating weak correlations and invest the resources to vigorously investigate nutrient-cancer and other disease associations with stronger methodology, so that we give the public lightning rods instead of sending them up the bell tower.

That last remark refers to Ben Franklin’s lightning rod and how churches used to think that ringing bells would protect against lightning strikes when in fact ringing bells only put the bell ringers in danger from lightning, which often struck bell towers because bell towers were usually the highest buildings in most towns. It’s a metaphor that fits, because the superstition about lightning before the lightning rod could divert devastating lightning strikes from bell towers is not unlike the irrational beliefs about food-cancer links that all too often predominate even today. The answer is more rigorous science and less publicizing of weak science.