Tag: Cochrane Reviews

Eye Drops for Dry Eyes

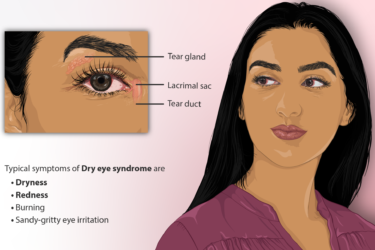

I could have chosen a prescription eye drop for my dry eyes. I decided not to. Here's why.

MMR is Safe and Effective

A new systematic review shows convincingly that the MMR vaccine is safe and effective.

More About Flu Vaccine

More evidence that flu shots work, that they are safe during pregnancy, and that they don't cause autism.

Are the recommended childhood vaccine schedules evidence-based?

We write about vaccines a lot here at SBM, and for a very good reason. Of all the medical interventions devised by the brains of humans, arguably vaccines have saved more lives and prevented more disability than any other medical treatment. When it comes to infectious disease, vaccination is the ultimate in preventive medicine, at least for diseases for which vaccines can...

May the Floss be with you?

I’m beginning this blog post under the assumption that the average Science Based Medicine reader is more intelligent, more motivated, and more health conscious than your typical Jane or Joe Blow. As such, most of you probably visit your friendly neighborhood dentist for cleanings and checkups twice a year, just as ~~the toothpaste manufacturers~~ the American Dental Association recommends. And if you’re like...

Cochrane Review on Community Water Fluoridation

One of the overriding themes of the Science Based Medicine blog is to use rigorous science when evaluating any health claim – be it medical, dental, dietary, fitness, or any other assertion put forth with the intention of improving one’s health. Once the scientific evidence is evaluated as to efficacy, there are other criteria which must be taken into consideration, such as...

What is Science?

Consider these statements: …there is an evidence base for biofield therapies. (citing the Cochrane Review of Touch Therapies) The larger issue is what constitutes “pseudoscience” and what information is worthy of dissemination to the public. Should the data from our well conducted, rigorous, randomized controlled trial [of ‘biofield healing’] be dismissed because the mechanisms are unknown or because some scientists do not...

Integrative Medicine: “Patient-Centered Care” is the new Medical Paternalism

Integrative Pitchmen: Several of us have written about how contemporary quacks have artfully pitched their wares to a higher-brow market than their predecessors were accustomed to, back in the day. Through clever packaging,* quacks today can reasonably hope to become professors at prestigious medical schools, to control and receive substantial grant money from the NIH, to preside over reviews for the Cochrane...

Cochrane is Starting to ‘Get’ SBM!

This essay is the latest in the series indexed at the bottom.* It follows several (nos. 10-14) that responded to a critique by statistician Stephen Simon, who had taken issue with our asserting an important distinction between Science-Based Medicine (SBM) and Evidence-Based Medicine (EBM). (Dr. Gorski also posted a response to Dr. Simon’s critique.) A quick-if-incomplete review can be found here. One...