A point I make over and over again when talking about new or alternative therapies that are not supported by good clinical trial evidence is that lower-level evidence, such as theoretical justifications, anecdotes, and pre-clinical research like in vitro studies and animal model testing, can only be suggestive, never reliable proof of safety or efficacy. It is necessary to begin evaluating a new therapy that does not yet have clinical evidence to support it by showing a plausible theory for why it might work and then moving on to demonstrate that it actually could work through pre-clinical research, which includes biochemistry, cell culture, and animal models. This sort of supporting pre-clinical evidence is what we mean when we refer to the “prior plausibility” of a clinical study. But this kind of evidence alone is not sufficient to support using the therapy in real patients except under experimental conditions, or when the urgency to intervene is great enough to balance the significant uncertainty about the effects of the intervention.

In support of this conclusion, we can consider the inherent unreliability of individual human judgments and all the many ways in which inadequately controlled research can mislead us. And we can reflect on how promising results in early trials often melt away when better, larger, more rigorous studies are done that better control for bias (the so-called Decline Effect). And it is not at all difficult to compile a large list of examples of the harm inadequately studied medical interventions can cause.

But what I’d like to do here is focus on a particularly good specific example of why thorough clinical trial evaluation of promising ideas is not just a nice extra to confirm what we already believe is true; it is the only way to genuinely know whether our treatments do more good than harm.

SELECT: What Is It About?

The Selenium and Vitamin E Cancer Prevention Trial (SELECT) was initiated because, according to the National Cancer Institute (NCI) at the National Institutes of Health (NIH), “Evidence from epidemiologic studies, observational studies, and clinical trials looking at preventing cancers other than prostate cancer had suggested that selenium and/or Vitamin E might prevent prostate cancer.” The NCI describes some of the specific studies that suggested selenium and Vitamin E supplementation might protect against prostate cancer.

Selenium

Selenium is a nonmetallic trace element found in food, especially plant foods such as rice and wheat, seafood, meat, and Brazil nuts. Selenium is an antioxidant and may help control cell damage that could lead to cancer.

The Nutritional Prevention of Cancer (NPC) study, first reported in 1996, included 1,312 men and women who had a history of non-melanoma skin cancer. Results of the trial showed that men who took selenium to prevent new non-melanoma skin cancers received no benefit from selenium in preventing that disease. However, approximately 60 percent fewer new cases of prostate cancer were observed among men who had taken selenium for six and one-half years than among men who took placebo (1). In a 2002 follow-up report, the data showed that men who took selenium for more than seven and one-half years had about 52 percent fewer new cases of prostate cancer than men who took placebo (2). This trial was one of the reasons for studying selenium in SELECT.

Vitamin E

Vitamin E is in a wide range of foods, especially vegetables, vegetable oils, nuts, and egg yolks. Vitamin E, like selenium, is an antioxidant, which may help control cell damage that can lead to cancer.

In the 1998 study of 29,133 male cigarette smokers in Finland (known as the Alpha-Tocopherol, Beta-Carotene Trial, or ATBC), 32 percent fewer new cases of prostate cancer and 40 percent fewer deaths from prostate cancer were observed among men who took Vitamin E in the form of alpha-tocopherol to prevent lung cancer than among men who took a placebo.

So in 2001, SELECT began enrolling subjects in a randomized, blinded, placebo-controlled prospective clinical trial to see if supplementation with selenium, Vitamin E, or a combination of the two would reduce the incidence of prostate cancer. By 2004, over 35,000 men in the United States, Canada, and Puerto Rico had been enrolled. The subjects were randomly assigned to receive:

- Selenium and Vitamin E

- Selenium and a placebo

- Vitamin E and a placebo, or

- Two placebos.

Two placebos were used in the trial: one looked like a selenium capsule; the other looked like a Vitamin E capsule. Each placebo contained only inactive ingredients. Neither the participants nor the researchers knew who received the selenium and Vitamin E, or the placebos, a process known as blinding or masking.

The trial was originally planned for between 7 and 12 years of supplementation, with subsequent follow-up to monitor for the development of prostate cancer. It was designed to detect a 25% reduction in the incidence of prostate cancer, which was felt to be an achievable and clinically meaningful difference.

So How Did It Work Out?

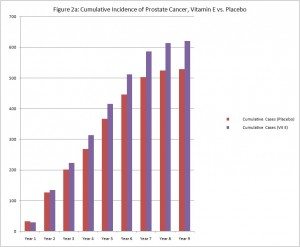

In 2008 the investigators reviewed the data up to that point and found no sign of a protective effect from selenium or Vitamin E, separately or taken together. It was judged unlikely that the 25% reduction in risk that was the criterion for effect in the study would be seen even if the study continued, so the supplementation portion of the study was halted. The subjects were told which supplement or placebo they had been given and were instructed to stop taking their supplements.

At that time, a greater number of cases of prostate cancer had developed in the group taking only Vitamin E than in the other groups. However, the difference did not reach statistical significance, so the investigators could not say whether it was a meaningful difference or only the result of chance.

Subsequently, the men have continued to be monitored, and the difference in the rate of prostate cancer between men who had been taking only Vitamin E supplements and the other men in the study has continued to grow even after they stopped taking the supplement. The difference is now statistically significant, so it likely represents a real biological phenomenon, not simply random chance. Men who were in the Vitamin E alone group have developed prostate cancer at a rate 17% higher than the men in the other groups.
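To illustrate why the same apparent excess can be statistically non-significant at one point and significant later, here is a minimal Python sketch. It is not a reanalysis of SELECT: the event counts and group sizes are hypothetical numbers chosen only so that the risk ratio is 1.17 in both scenarios, and the interval is a simple Wald approximation on the log scale rather than whatever method the SELECT investigators used.

```python
import math


def risk_ratio_ci(events_a, n_a, events_b, n_b, z=1.96):
    """Risk ratio of group A versus group B with an approximate
    95% confidence interval (Wald interval on the log scale)."""
    rr = (events_a / n_a) / (events_b / n_b)
    # Standard error of log(risk ratio) for two independent groups.
    se = math.sqrt(1 / events_a - 1 / n_a + 1 / events_b - 1 / n_b)
    lower = math.exp(math.log(rr) - z * se)
    upper = math.exp(math.log(rr) + z * se)
    return rr, lower, upper


def is_significant(lower, upper):
    """The difference is 'significant' if the interval excludes a ratio of 1."""
    return lower > 1.0 or upper < 1.0


# HYPOTHETICAL counts, not SELECT data: same 17% excess, few events so far.
early = risk_ratio_ci(events_a=117, n_a=8000, events_b=100, n_b=8000)

# HYPOTHETICAL counts: same 17% excess after many more events have accrued.
later = risk_ratio_ci(events_a=585, n_a=8000, events_b=500, n_b=8000)

for label, (rr, lower, upper) in (("early", early), ("later", later)):
    print(f"{label}: RR={rr:.2f}, CI=({lower:.2f}, {upper:.2f}), "
          f"significant={is_significant(lower, upper)}")
```

With few events the interval is wide and includes 1, so chance cannot be excluded; with more events the interval narrows around the same ratio and no longer includes 1. This is one reason continued follow-up can turn an ambiguous signal into a clear one.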

Those men who took selenium alone or selenium and Vitamin E also have a higher rate of prostate cancer than those men who received the placebos, but this difference is not yet statistically significant. No other differences suggesting a beneficial or harmful effect of either supplement have been detected.

So Why Didn’t It Work Out As Expected?

From a biological point of view, the reasons why Vitamin E supplementation turned out to be a risk factor for the development of prostate cancer instead of a protective factor aren’t yet known. Nor is it clear why a statistically significant increase in risk hasn’t yet been found for those men who took Vitamin E and selenium together. There are, of course, theories as to how precisely Vitamin E influences prostate cancer risk, and the NCI has solicited research proposals from other investigators to use the data and samples collected in SELECT to investigate this question.

But from the point of view of epistemology, how we figure out what works and what doesn’t, there are a number of reasons why the finding shouldn’t be entirely surprising. For one thing, new ideas often turn out to be wrong. The degree of plausibility to the underlying theory and the amount of supportive pre-clinical research evidence are useful in determining roughly how promising an idea is, but ultimately the proof is in the pudding, with the pudding being multiple replicated independent high-quality randomized clinical trials. Given the incredible number of complex, interacting factors involved in the development of each particular disease, it shouldn’t surprise us when even a reasonable hypothesis with limited support from observational trials and other lower level evidence turns out to be false.
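The role of prior plausibility can be made concrete with a small Bayesian sketch in Python. The function name, the priors, and the default power and false-positive rate below are illustrative assumptions, not figures from SELECT or the NCI; the calculation also ignores bias and selective publication, both of which would lower the result further.

```python
def prob_hypothesis_true(prior, power=0.8, alpha=0.05):
    """Probability that a hypothesis is true after ONE 'positive' study,
    given its prior plausibility.

    prior: assumed probability the hypothesis is true before the study
    power: assumed chance the study detects a real effect
    alpha: assumed chance the study reports an effect that is not real
    """
    true_positive = power * prior
    false_positive = alpha * (1.0 - prior)
    return true_positive / (true_positive + false_positive)


# Illustrative priors only: a well-supported idea, a speculative one,
# and a highly implausible one.
for prior in (0.5, 0.1, 0.01):
    posterior = prob_hypothesis_true(prior)
    print(f"prior {prior:.2f} -> probability true after one positive study: "
          f"{posterior:.2f}")
```

Under these assumptions, a single positive result leaves a speculative hypothesis far from established, and a highly implausible one still more likely false than true, which is why replication in rigorous trials matters most when the starting plausibility is modest.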

And in the case of this particular hypothesis, the preliminary evidence that suggested the need for SELECT in the first place didn’t work out so well either. As already mentioned, “results of the [Nutritional Prevention of Cancer] trial showed that men who took selenium to prevent new non-melanoma skin cancers received no benefit from selenium in preventing that disease.” And there has since been some indication of potential harm, though the data are conflicting: “Since the start of SELECT, four studies have been published on the effect of selenium on blood glucose and risk of diabetes. Two studies suggested that higher levels of selenium taken from supplements or received naturally were associated with an increased risk of diabetes. One study showed no such association, and one showed that people with higher levels of selenium in their blood had a reduced risk of diabetes (3-6).”

As for Vitamin E, the NCI states, “There are no clinical trials that show a benefit from taking vitamin E to reduce the risk of prostate cancer or any other cancer or heart disease (7, 8, 9-13).” And the subjects in the [ATBC trial](http://www.cancer.gov/newscenter/qa/2003/atbcfollowupqa) who took Vitamin E had a 50% increase in the occurrence of hemorrhagic strokes, an 18% increase in the occurrence of lung cancer, and an 8% overall greater mortality than control subjects. So not only were the initial promising results suggesting a benefit for prostate cancer not correct, there were fewer benefits and greater risks than expected in terms of other diseases as well.

The Bottom Line

The moral of this story is simple and shouldn’t be controversial: While a plausible theory and suggestive preclinical evidence may suggest a new therapy could be beneficial, only multiple, rigorous, high-quality clinical trials can determine if this is actually true. Good ideas turn out to be wrong often enough that our initial enthusiasm with the ideas themselves and with supportive preliminary research data should always be tempered by an understanding that these lower levels of evidence are inherently less reliable than the slow, expensive, complicated process of rigorous clinical studies. And bad ideas, those without a plausible theoretical foundation or supportive pre-clinical evidence, are even less likely to bear fruit when properly examined.

Of course, such high-quality studies may not always be possible. As a veterinarian, I can never expect to have a 7-year prospective study of 35,000 subjects to rely on. So the temptation to act on lesser evidence is understandable. But therapies used without higher-quality evidence to support them are inevitably going to be more likely to fail or to cause harm than therapies that have been more thoroughly studied. We must accept this and factor that knowledge into our clinical decisions. If the need to intervene is great enough, it can be justifiable to employ therapies without high-quality clinical trial evidence to support them. However, we must convey the real degree of uncertainty to our patients or clients, and we must be especially vigilant and honest about potential failures or unintended consequences.

It is common to see sweeping and confident claims of benefit and safety for alternative therapies that, when examined, turn out to be based on suggestive evidence often much weaker than that which led the NCI to study the effect of selenium and Vitamin E on prostate cancer risk. CAM advocates have gotten quite good at throwing up publication references to support their claims, and the hassle factor involved in examining those papers in detail to see what level of evidence they actually provide can discourage anyone from thoroughly investigating these claims. This, of course, leaves the impression that there is good evidence to support what CAM advocates are saying, not only in the minds of the general public but in the minds of physicians, veterinarians, nurses, and others who should know better.

I think much of the success of the “integrative medicine” meme has been based on the lack of an adequate understanding among health professionals about the serious limitations of low-level evidence. SELECT illustrates nicely how even a plausible intervention with enough low-level evidence to justify a major clinical trial can prove not only less helpful than originally hoped but even actively harmful. The same principle applies to an even greater degree to less plausible hypotheses. High-quality clinical trials are not simply icing on the cake confirming what we already know; they are the cake, without which we know a lot less than we usually think.

References

1. Clark LC, Combs GF Jr., Turnbull BW, et al. [Effects of selenium supplementation for cancer prevention in patients with carcinoma of the skin. A randomized controlled trial: Nutritional Prevention of Cancer Study Group.](http://www.ncbi.nlm.nih.gov/pubmed/8971064) JAMA 1996; 276(24):1957-1963.

2. Duffield-Lillico AJ, Reid ME, Turnbull BW, et al. [Baseline characteristics and the effect of selenium supplementation on cancer incidence in a randomized clinical trial: A summary report of the Nutritional Prevention of Cancer Trial.](http://www.ncbi.nlm.nih.gov/pubmed/12101110) Cancer Epidemiology, Biomarkers & Prevention 2002; 11(7):630-639.

3. Stranges et al. [Effects of Long-Term Use of Selenium Supplements on the Incidence of Type 2 Diabetes.](http://www.annals.org/content/147/4/217) Annals of Internal Medicine; 147:217-233, 2007.

4. Bleys J et al. [Serum Selenium and Diabetes in U.S. Adults.](http://www.ncbi.nlm.nih.gov/pubmed/17392543) Diabetes Care; 30:829-834, 2007.

5. Rajpathak et al. [Toenail Selenium and Cardiovascular Disease in Men with Diabetes.](http://www.ncbi.nlm.nih.gov/pubmed/16093402) Journal of the American College of Nutrition; 24:250-256, 2005.

6. Czernichow et al. [Antioxidant supplementation does not affect fasting plasma glucose in the Supplementation with Antioxidant Vitamins and Minerals (SU.VI.MAX) study in France: association with dietary intake and plasma concentrations.](http://www.ajcn.org/content/84/2/395.short) American Journal of Clinical Nutrition; 84:395-9, 2006.

7. Lippman SM, Klein EA, Goodman PJ, et al. Effect of selenium and vitamin E on risk of prostate cancer and other cancers. JAMA 2009; 301(1). Published online December 9, 2008. Print edition January 2009.

8. Klein EA, Thompson IM, Tangen CM, et al. Vitamin E and the Risk of Prostate Cancer: Results of The Selenium and Vitamin E Cancer Prevention Trial (SELECT). JAMA 2011; 306(14):1549-1556.

9. Yusuf S, Dagenais G, Pogue J, et al. Vitamin E supplementation and cardiovascular events in high risk patients. The Heart Outcomes Prevention Evaluation Study Investigators. New England Journal of Medicine 2000; 342:154-60.

10. Sesso HD, Buring JE, Christen WG, et al. Vitamins E and C in the prevention of cardiovascular disease in men: the Physicians’ Health Study II randomized controlled trial. JAMA 2008; 300(18):2123-33.

11. Lee IM, Cook NR, Gaziano JM, et al. Vitamin E in the primary prevention of cardiovascular disease and cancer: the Women’s Health Study: a randomized controlled trial. JAMA 2005; 294(1):56-65.

12. Lonn E, Bosch J, Yusuf S, et al. Effects of long-term vitamin E supplementation on cardiovascular events and cancer: A randomized controlled trial. JAMA 2005; 293(11):1338-1347.

13. Miller ER III, Pastor-Barriuso R, Dalal D, et al. Meta-analysis: High-dosage vitamin E supplementation may increase all-cause mortality. Annals of Internal Medicine 2005; 142(1):37-46.